1. Introduction

This paper defends a new account of laws, the Nomic Likelihood Account. The motivation for this account comes from the desire for an account that satisfies five desiderata, desiderata I take to be necessary conditions on an adequate account of laws. Roughly, these desiderata are (1) providing a unified account of laws and chances, (2) entailing plausible relations between laws and chances, (3) explaining why chance events deserve the numerical values we assign them, (4) accommodating both dynamical and non-dynamical chances, and (5) accommodating a plausible range of nomic possibilities.

The Nomic Likelihood Account satisfies all of these desiderata. In broad strokes, the account proceeds as follows. First, it posits a single fundamental nomic relation, the “nomic likelihood” relation, which satisfies certain constraints. Then it characterizes laws and chances in terms of this relation. So on this account, laws and chances end up being things that encode facts about the web of nomic likelihood relations.

I’ll present the Nomic Likelihood Account in a largely theory-neutral manner. The main assumption I’ll make, following Lewis (1983), is that there’s a special subset of properties, the perfectly natural or fundamental properties, that fix all qualitative truths. Thus to describe what the world is like, it suffices to describe what there is and what fundamental properties those things have. And to provide an adequate account of some important feature of the world, one must ultimately be able to spell it out in the language of fundamental properties.1

Here is a road map for the rest of this paper. In Section 2, I spell out the desiderata on an adequate account of laws sketched above. After presenting and motivating these desiderata (Section 2.1), I suggest that none of the extant accounts of laws satisfy these desiderata, and show how several popular accounts fail to do so (Section 2.2). In Section 3, I offer an intuitive sketch of the Nomic Likelihood Account. In Section 4, I present the nomic likelihood relation and the constraints I take this relation to satisfy. In Section 5, I present a representation and uniqueness theorem showing that the pattern of instantiations of the nomic likelihood relation can be uniquely represented by things that look a lot like laws and chances (Section 5.1). This theorem has some unique features that are of independent interest: it can distinguish between nomically forbidden events and chance events that aren’t nomically forbidden (e.g., an infinite number of fair coin tosses landing heads), and it doesn’t employ the kind of “richness” assumptions that such theorems typically require. Using these results, I propose an account of laws and chances (Section 5.2), describe some features of laws and chances that follow from this account (Section 5.3), and apply the account to a toy example (Section 5.4). In Section 6, I show how the Nomic Likelihood Account satisfies the desiderata described above. In Section 7, I consider some worries for the Nomic Likelihood Account. I conclude in Section 8. Appendices A, B, and C contain proofs of the main results.

2. Desiderata for an Adequate Account of Laws

2.1. The Desiderata

I’ll now present five desiderata that I think must be satisfied by any adequate account of laws. While I’ll briefly motivate these desiderata, I won’t engage in an extended defense of them here. Those who are inclined to contest some of these desiderata can understand my case for the Nomic Likelihood Account as taking conditional form: if one takes these to be desiderata for an adequate account of laws, then we have reason to accept something like the Nomic Likelihood Account.

Desideratum 1. An adequate account should provide a unified (and appropriately discriminating) account of laws and chances.

An adequate account of laws should provide a unified account of laws and chances. It should allow for both probabilistic and non-probabilistic laws, and it should recognize non-probabilistic laws as a limiting case of probabilistic laws. That is, it should recognize that nomic requirements/forbiddings and chances are of a kind, differing only on where they lie on the spectrum of nomic likelihood, with nomic requirements at one end, nomic forbiddings at the other, and non-trivial chances in between. Moreover, it should do this without conflating being nomically required/forbidden with having a chance of 1/0. After all, there are events that have a chance of 0 that aren’t nomically forbidden (e.g., infinitely many fair coin tosses landing heads), and events that have a chance of 1 that aren’t nomically required (e.g., infinitely many fair coin tosses not all landing heads).2

Desideratum 2. An adequate account should yield plausible connections between laws and chances, laws and other laws, and chances and other chances.

An adequate account of laws should yield plausible relations between laws and chances, laws and other laws, and chances and other chances. For example, it should entail that nomically required events have a chance of 1. It should entail that something can’t be nomically forbidden and nomically required at the same time. And it should say something about how the dynamical chances at one time are related to the dynamical chances at another.

Desideratum 3. An adequate account should describe what, at the fundamental level, makes it the case that chance events deserve the numerical values they’re assigned.

An adequate account of laws should provide a satisfactory explanation for why chance events deserve the numerical values we assign them. That is, it should provide an account of the metaphysical structure underlying chances that explains why these numerical assignments are a “good fit” with the underlying metaphysical reality.

To get a feel for what this desideratum requires, let’s consider an unsatisfactory attempt to meet this demand. Suppose one tried to satisfy this desideratum by stipulating that, as a primitive fact, the world has a nomic disposition of strength to bring about one state of affairs given some other state of affairs. What, at the fundamental level, does this posit amount to?

At first glance, this would seem to amount to positing a fundamental “nomic disposition” relation between one state of affairs, another state of affairs, and the number But it’s implausible to think that, at the fundamental level, the chance facts boil down to relations to numbers of this kind. After all, the choice to assign chances values between and is purely conventional; we could assign chances using values between and or and just as well.3 A more plausible story would provide some non-numerical relations whose structure justifies these numerical assignments. But this would, of course, require doing more than simply stipulating the existence of a nomic disposition of a certain numerical strength.

Desideratum 4. An adequate account should be able to accommodate both dynamical and non-dynamical chances (like those of statistical mechanics).4

An adequate account of laws should be able to accommodate both dynamical chances—such as those of the GRW interpretation of quantum mechanics—and non-dynamical chances—such as those of statistical mechanics.5 Since statistical mechanical chances are macrostate-relative and compatible with determinism, it follows that an adequate account of laws should be able to make sense of macrostate-relative chances and non-trivial chances at deterministic worlds.6

Desideratum 5. An adequate account should be able to accommodate plausible nomic possibilities.

An adequate account of laws should be able to make sense of a plausible range of nomic possibilities. For example, it should be able to make sense of laws concerning particular locations, times, or objects, like the Smith’s garden case discussed by Tooley (1977). It should be able to make sense of uninstantiated laws, such as worlds where is a law but there are no massive objects. It should be able to make sense of a world in which there is only one chance event (a coin toss, say) with a chance of of landing heads and a chance of of landing tails. And it should be able to distinguish such a world from an otherwise identical world in which the chance of heads is and the chance of tails is

While this is a desideratum that many accounts of laws and chances fail to fully satisfy (see Section 2.2), it’s most notably violated by Humean accounts—accounts on which the laws and chances supervene on the distribution of local qualities. For example, such accounts cannot make sense of uninstantiated laws, nor can they distinguish between worlds which differ only with respect to their chance assignments. Humeans take this to be a bullet worth biting in order to avoid positing fundamental nomic properties or powers. As such, Humeans won’t take desideratum 5 to be a requirement on an adequate account of laws, even though they might concede that failing to accommodate plausible nomic possibilities is a mark against their view. The debate between Humeans and non-Humeans is a long one, and I won’t attempt to settle it here. Instead, I’ll simply side with the non-Humeans, and assume that desideratum 5 is a requirement on an adequate account of laws.

2.2. Other Accounts

To my knowledge, no existing account of laws satisfies the five desiderata described above. Due to space constraints, I won’t try to provide an exhaustive discussion of the existing accounts and why they fall short. Instead, I’ll just briefly discuss seven prominent accounts, and flag the desiderata that each fails to satisfy.

1. Carroll’s (1994) primitivist account fails to satisfy desiderata 2 and 3. Carroll’s account takes what the laws and chances are to be primitive. But simply stating that such-and-such laws and chances hold doesn’t suffice to tell us what relations can hold between laws/chances and other laws/chances (desideratum 2). For example, it doesn’t tell us anything about how the dynamical chances at one time should be related to the dynamical chances at another.

Likewise, simply stating that it’s a primitive fact that a certain event has a chance of doesn’t provide a plausible story for what, at the fundamental level, makes this event deserve this numerical assignment (desideratum 3). At first glance, the claim that it’s a fundamental fact that a certain event has a chance of seems to be asserting that some kind of fundamental relation holds between that event and a number. But as we saw in Section 2.1, this story is deeply implausible. Alternatively, one might understand claims about numerical chance assignments as concise ways of describing some more fundamental non-numerical structure that underlies these numerical assignments. But such a story requires a description of what this more fundamental non-numerical structure is, and Carroll’s account doesn’t provide us with these details.7

2. Lewis’s (1994) best system account of laws fails to satisfy desiderata 4 and 5. Lewis’s account requires all chances to be dynamical chances, and so fails to satisfy desideratum 4.8 And as a Humean account—an account which takes the laws to supervene on the distribution of local qualities—it fails to satisfy desideratum 5, since it’s unable to accommodate a plausible range of nomic possibilities. For (as we saw in Section 2.1) there are plausible nomic possibilities—such as pairs of worlds that are identical with respect to the distribution of local qualities but different with respect to the chances—that Humean accounts cannot recognize.

3. Armstrong’s (1983) universalist account fails to satisfy desiderata 2, 3 and 5. On one natural reading of Armstrong’s account, it takes the nomic facts to be entailed by infinitely many fundamental necessitation relations—each intuitively corresponding to a different chance value—which hold between pairs of fundamental properties (universals) and 9 Armstrong’s account fails to satisfy desideratum 3 because it doesn’t provide these necessitation relations with any structure that would justify one numerical assignment over any other. For example, nothing about the account tells us whether the necessitation relation is stronger than the necessitation relation or whether is closer in strength to than or whether is twice as strong as In a similar vein Armstrong’s account fails to satisfy desideratum 2, since it doesn’t say enough about these necessitation relations to determine what, for example, the relation between dynamical chances at different times is. Finally, Armstrong’s account rules out plausible nomic possibilities (desideratum 5), since it rules out the possibility of worlds with uninstantiated laws or chances (such as a world where Newton’s gravitational force law holds but there are no masses).10

4–5. Swoyer’s (1982) necessitarian account and Lange’s (2009) counterfactual account both fail to satisfy desiderata 1, 2 and 3. While these accounts differ in a number of ways, they are similar in that neither takes chances to be part of the laws. Instead, they take chances to be just another quantitative property like mass or charge, and their accounts say little more about what chances are like. As a result, these accounts fail to satisfy the first three desiderata: they fail to provide a unified account of laws and chances (desideratum 1), they fail to yield plausible relations between laws/chances and other laws/chances (desideratum 2), and they fail to explain what, at the fundamental level, makes chance events deserve the numerical values they’re assigned (desideratum 3).11

The preceding discussion suggests that most extant accounts of laws have particular trouble satisfying desiderata 2 and 3. This is likely because these accounts have largely focused on non-probabilistic laws, with probabilistic laws being something of a sideshow. So I’ll conclude by assessing two accounts of chances that do better with respect to desiderata 2 and 3. Since these accounts are only intended as accounts of chance, they won’t provide a unified account of laws and chances (desideratum 1), nor say everything we’d like about how laws/chances bear on other laws/chances (desideratum 2). But it’s worth seeing how they fare.

6. Suppes’s (1973) propensity account of chances fails to satisfy desiderata 1, 2 and 3, though it does better with respect to desideratum 3 than the other accounts we’ve considered. Suppes takes an “at least as probable as” relation as primitive, imposes certain constraints on this relation, and then uses these constraints to provide representation theorems for various kinds of probabilistic phenomena, such as radioactive decay and coin tosses.12 These representation theorems show, roughly, that one can assign numerical values to chance events that will line up with the “at least as probable as” relation and satisfy the probability axioms.

Suppes’s account fails to satisfy desiderata 1 and 2 for the reasons given above—since it only provides an account of chances, not laws and chances, it doesn’t provide a unified account of laws and chances, or the relationships between them. Moreover, Suppes’s account doesn’t provide a unified account of chances. For Suppes takes different probabilistic phenomena to impose different kinds of constraints, and goes on to provide different representation theorems for these different phenomena. Thus Suppes’s account of chances is highly heterogeneous.13

Suppes’s account does better with respect to desideratum 3, making substantial progress with respect to explaining what, at the fundamental level, makes chance events deserve the numerical values they’re assigned. Unfortunately, it still falls short of providing a satisfactory justification. For while Suppes’s approach yields a representation theorem, it doesn’t yield the uniqueness theorem required to show that these numerical representations are unique. Thus this account doesn’t justify our assigning the particular numerical values that we do.
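
To illustrate why a representation theorem without a uniqueness theorem leaves the numerical values underdetermined, here is a minimal sketch in Python. It is not drawn from Suppes’s own formalism: the three-outcome space, the particular weights, and all function names are hypothetical choices made for illustration. The sketch shows only that two distinct probability assignments can line up with exactly the same comparative ordering over events.

```python
from fractions import Fraction
from itertools import combinations

# Hypothetical toy outcome space (an assumption for illustration only).
OUTCOMES = ("a", "b", "c")


def events(outcomes):
    """Return every subset of the outcome space as a frozenset."""
    return [
        frozenset(combo)
        for size in range(len(outcomes) + 1)
        for combo in combinations(outcomes, size)
    ]


def prob(event, weights):
    """Probability of an event: the sum of the weights of its outcomes."""
    return sum((weights[o] for o in event), Fraction(0))


def ordering(weights):
    """All pairs (x, y) such that x is at least as probable as y."""
    evs = events(OUTCOMES)
    return {
        (x, y)
        for x in evs
        for y in evs
        if prob(x, weights) >= prob(y, weights)
    }


# Two different assignments, each summing to 1.
p1 = {"a": Fraction(6, 10), "b": Fraction(3, 10), "c": Fraction(1, 10)}
p2 = {"a": Fraction(11, 20), "b": Fraction(6, 20), "c": Fraction(3, 20)}

same_ordering = ordering(p1) == ordering(p2)
different_values = p1 != p2
```

In this toy case both assignments represent the same comparative facts, so the comparative facts alone do not single out either set of numbers. This is the kind of gap that a uniqueness theorem is meant to close.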

7. Konek’s (2014) propensity account of chances fails to satisfy desiderata 1, 2 and 5. Konek’s account employs a primitive “comparative propensity ordering” that satisfies certain constraints, and then uses these constraints to provide a representation and uniqueness theorem. Thus we finally have an account which fully satisfies desideratum 3—an account that explains what, at the fundamental level, makes chance events deserve the numerical values we assign them.

But Konek’s account fails to satisfy desiderata 1 and 2 for reasons we’ve already seen—since it’s not an account of laws and chances, just chances, it doesn’t provide a unified account of laws and chances, or describe the relations that hold between them. Moreover, Konek’s account also doesn’t yield all of the relations between chances that one would like. For example, it doesn’t say anything about how dynamical chances at different times are related.14

Finally, Konek’s account fails to recognize some plausible nomic possibilities (desideratum 5). It seems possible for there to be a world with only one chance event (a coin toss) with a chance of of landing heads (cf. Section 2.1). And this possibility seems distinct from an otherwise identical world where the chance of heads is But on Konek’s account neither of these worlds is possible: the comparative propensity ordering facts that line up with these numbers will be too weak to yield a precise numerical chance assignment, so Konek’s account will take such worlds to have imprecise chances. And since the comparative ordering facts that line up with these numbers will be the same in both worlds, Konek’s account can’t recognize these possibilities as distinct.

3. The Nomic Likelihood Account (I): The Intuitive Picture

Let’s start by sketching the intuitive picture behind the Nomic Likelihood Account.

It’s natural to think that laws and chances are of a kind. Deterministic laws tell us that if one state of affairs obtains, then another state of affairs is nomically required to obtain. Chances tell us that if one state of affairs obtains, then another state of affairs has a certain nomic likelihood of obtaining. And nomic requirements and nomic likelihoods seem to be instances of the same kind of thing. Nomic requirements are just what you get when you turn the nomic likelihood “all the way up”.

Now, the nomic likelihood of one state of affairs given another is a quantitative feature of the world. You can have different degrees of nomic likelihood. And these degrees can be characterized in precise, numerical ways—one state of affairs can be twice as likely as another, for example. So what undergirds these quantitative features of the world? What’s the metaphysical structure underlying nomic likelihoods?

The view I propose takes its cue from a popular account of quantitative properties like mass.15 Consider an object that has a certain amount of mass. What undergirds the fact that it has that quantity of mass? According to one popular account, it’s the mass relations that hold between the object and all other massive objects. For example, this object might be more massive than some objects, and less massive than others. And it’s this web of mass relations that fixes the particular amount of mass the object has. What it is for an object to have a particular amount of mass is just for it to bear the right relations of this kind to everything else.

The Nomic Likelihood Account adopts a similar approach to nomic likelihood. In the case of mass, what bears a quantity of mass is an object.16 In the case of nomic likelihood, what bears a quantity of nomic likelihood is a pair of states of affairs—given this state of affairs, there’s such-and-such likelihood of this other state of affairs coming about. Or, if we factor in the fact that these likelihoods can vary from world to world, what bears a quantity of nomic likelihood is a triple—a pair of states of affairs and a world.

Now consider a triple that has a certain nomic likelihood—at this world, given this state of affairs, there’s such-and-such likelihood of this other state of affairs coming about. What undergirds the fact that this triple has that nomic likelihood? According to the Nomic Likelihood Account, it’s the relations that hold between that triple and all other triples that have nomic likelihoods. For example, this triple might be more nomically likely than some triples, and less nomically likely than others. And it’s this web of nomic likelihood relations that fixes the particular amount of nomic likelihood this triple has. What it is for a triple to have a particular nomic likelihood is just for it to bear the right relations to other triples.17

Of course, a satisfying account has to do more than just gesture at certain relations. Return to the case of mass. A satisfying account of quantities of mass has to do more than gesture at some mass relations. It has to tell us what these relations are, what these relations are like, and how these relations vindicate taking masses to be quantitative, i.e., vindicate assigning numerical values to these quantities in the way that we do. And this is what accounts of quantitative properties like mass do. They propose certain fundamental mass relations, present some “axioms” that describe how these relations behave, and provide a representation and uniqueness theorem showing that these relations vindicate our using numbers to represent the amount of mass things have in the way that we do.

Providing a satisfying account of nomic likelihood requires doing something similar. We need to spell out what the fundamental relations are, what these relations are like, and how these relations vindicate assigning numerical values to chances in the way that we do. This is what I’ll do in the next two sections. I’ll spell out the fundamental nomic likelihood relation, present some “axioms” describing how this relation behaves, and provide a representation and uniqueness theorem showing that these relations vindicate our using numbers to represent amounts of nomic likelihood in the way that we do. And with an account of nomic likelihood in hand, it’s straightforward to provide an account of laws and chances.

While proponents of the Nomic Likelihood Account can remain neutral about many metaphysical debates, it’s hard to sketch an intuitive picture of the view in a theory-neutral manner. So I’ve made some assumptions in this Section while presenting the picture; for example, I’ve appealed to things like Chisholm-style states of affairs. But these aren’t assumptions that the Nomic Likelihood Account is wedded to; we’ll return to discuss some alternative approaches in Section 7.18

4. The Nomic Likelihood Account (II): The Posit

In this Section I’ll present the key posit of the Nomic Likelihood Account, the nomic likelihood relation. In Section 4.1 I’ll introduce the nomic likelihood relation. In Section 4.2 I’ll introduce some helpful terminology. In Section 4.3 I’ll describe the constraints (i.e., axioms) that I take the nomic likelihood relation to satisfy.

Two comments before we get started. First, in Section 3 I talked about nomic likelihoods in terms of states of affairs. As it turns out, it will be formally more convenient to characterize nomic likelihoods in terms of propositions instead of states of affairs. But this is purely for convenience—we could formulate everything in terms of states of affairs instead, albeit in a slightly clunkier way.19 In what follows I’ll assume that a proposition can be identified with the set of possible worlds at which it’s true.20 I’ll take to be the set of all possible worlds, i.e., the trivially true proposition that some possibility obtains, and I’ll take to be the empty set, i.e., the trivially false proposition that no possibility obtains.

Second, it’s worth saying something about the representation and uniqueness theorem this approach employs in order to help the reader understand the motivation for some of the axioms. The measurement theory literature contains a number of representation and uniqueness theorems which take an ordering relation that satisfies certain constraints, and show that there’s a unique numerical representation that lines up with that relation. Given this, working out the axioms of the nomic likelihood relation and providing a representation and uniqueness theorem for it seems like a straightforward task. All that’s required to complete this project, it seems, is to take one of these formal results and change its interpretation.

Unfortunately, none of the results in the literature can do the work required, for two reasons. First, none of the results in the literature I’m aware of can distinguish between having a probability of 1 and being required to be true or, given the interpretation we’re interested in, between having a chance of 1 and being nomically required. So while these results provide us with something to identify chances with, they don’t provide us with something to identify nomic requirements with. (Recall that we can’t just take nomic requirements to be the things that have a probability of 1, for there are things which have a probability of 1 that aren’t nomically required, e.g., an infinite number of fair coin tosses not all landing heads.) In order to satisfy the first desideratum of Section 2.1, we need an account that can make such distinctions.

Second, all of the theorems in the literature I know of require strong “richness” assumptions in order to derive their result.21 These richness assumptions impose strong constraints on the probability function, such as, e.g., that for every value in the unit interval, there’s something that has that probability. This rules out plausible nomic possibilities like there being a world with only a single chance event, e.g., a coin toss, which has a chance of of heads and a chance of of tails. In order to satisfy the fifth desideratum of Section 2.1, we need an account that can recognize such possibilities.

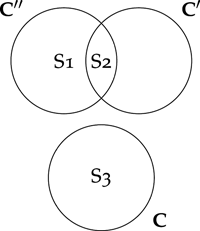

The framework I’ll present will allow us to distinguish between having a chance of 1 and being nomically required. It does so by introducing, in addition to the unique largest and smallest nomic likelihoods, unique next largest and next smallest likelihoods. Likewise, the framework I’ll present doesn’t need to posit the kind of richness axioms the existing theorems require. This is because it introduces cross-world relations that effectively allow us to “import” richness from other worlds. Of course, these changes require replacing many of the standard axioms that the results in the literature employ, and showing that we can still derive everything we want from their replacements.

4.1. The Nomic Likelihood Relation

Here is the fundamental posit of the Nomic Likelihood Account:

The Nomic Likelihood Relation: There exists a fundamental six-place nomic likelihood relation, given at is at least as nomically likely as given at that satisfies the 12 nomic axioms (cf. Section 4.3), where are worlds, and are propositions that supervene on the fundamental properties and relations other than

The last clause ensures that the propositions the nomic likelihood relation holds of aren’t themselves about nomic facts. I take this constraint to be independently plausible, and it ensures that we won’t run into self-reference paradoxes. Now let’s turn to the 12 nomic axioms that the nomic likelihood relation is required to satisfy.

4.2. Terminology

Let me start by introducing some terminology.

Let be an ordered triple consisting of a pair of propositions and a world I’ll call and the antecedent and consequent propositions of the triple, respectively. When expressing such triples, everything that’s bolded should be understood as describing the consequent proposition of the triple. E.g., is a triple whose consequent proposition is whose antecedent proposition is and whose world is When talking about triples which share the same indices, I’ll leave the indices implicit.

At the risk of abusing notation, I’ll often express the nomic likelihood relation in terms of these triples. Thus I’ll use as shorthand for Using this notation, we can define the “more nomically likely than” relation as follows: iff and Likewise, we can define the “nomically on a par” relation as follows: iff and
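
As a rough illustration of how the two derived relations can be read off the “at least as nomically likely as” relation, here is a minimal sketch in Python. It assumes the standard way of deriving a strict relation and a parity relation from a weak one: strictly more likely means the weak relation holds in one direction only, and on a par means it holds in both directions. The labels, the numerical ranks, and the function names are hypothetical stand-ins for triples, used only to build a toy relation.

```python
# Hypothetical ranks standing in for a toy "at least as likely" relation
# over three labelled triples. The numbers are an illustrative assumption;
# the account itself does not take ranks as primitive.
RANKS = {"rain": 3, "snow": 2, "sun": 2}


def at_least(x, y):
    """Toy weak relation: x is at least as nomically likely as y."""
    return RANKS[x] >= RANKS[y]


def strictly_more_likely(x, y):
    """x is more nomically likely than y: weak relation one way only."""
    return at_least(x, y) and not at_least(y, x)


def on_a_par(x, y):
    """x and y are nomically on a par: weak relation holds both ways."""
    return at_least(x, y) and at_least(y, x)


rain_beats_snow = strictly_more_likely("rain", "snow")
snow_par_sun = on_a_par("snow", "sun")
snow_beats_sun = strictly_more_likely("snow", "sun")
```

On this toy relation, rain is strictly more likely than snow, while snow and sun are on a par and neither is strictly more likely than the other.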

Let (for “nomic space”) be the set of all triples such that and are either the first three or last three arguments of some instantiation of Intuitively, is the set of all triples that have nomic likelihoods.

Let the be the subset of containing all the triples with and as their second and third members. Intuitively, and pick out a situation, and the identifies the consequent propositions that nomic likelihoods are assigned to in that situation. For example, if and pick out a chance distribution, the will consist of the triples whose consequent propositions are assigned chances by this distribution. Note that clusters can be “gappy”, in the sense that for some propositions the won’t contain This is because, holding and fixed, there can be nomic constraints on some consequent propositions but not others. For example, and might pick out a chance distribution which assigns chances to propositions about the behavior of particles, but not to propositions about the behavior of incorporeal spirits. Likewise, note that clusters can be empty. For example, if is a lawless world, then the will be empty, since no triples of the form are assigned nomic likelihoods.

With this notation in hand, let’s turn to the 12 nomic axioms.

4.3. The Nomic Axioms

1. We haven’t imposed any constraints on which consequent propositions are assigned nomic likelihoods in an For example, as it stands, it could be the case that is assigned a nomic likelihood but is not; or that and are assigned nomic likelihoods but is not. The first axiom ensures that the consequent propositions that are assigned nomic likelihoods are closed under natural operations like negation and disjunction. E.g., it ensures that if given certain meteorological conditions at world there’s some nomic likelihood of it raining then there’s also some nomic likelihood of it not raining and if there’s some nomic likelihood of it raining and some nomic likelihood of it snowing then there’s also some nomic likelihood of it raining or snowing

Axiom 1

If is in then is in

If are in then is in

Formally, this axiom ensures that for every non-empty the consequent propositions in that cluster form a
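
To make the closure requirement concrete, here is a minimal sketch in Python, assuming a finite toy space of four worlds and treating propositions as sets of worlds. The sketch checks only closure under negation and pairwise disjunction; the world labels, the proposition names, and the function name are hypothetical.

```python
from itertools import combinations

# Hypothetical toy space of possible worlds (illustrative assumption).
WORLDS = frozenset({1, 2, 3, 4})


def closed_under_negation_and_disjunction(worlds, propositions):
    """Check that a family of propositions (sets of worlds) contains the
    negation of each member and the disjunction of each pair of members."""
    family = set(propositions)
    for p in family:
        if worlds - p not in family:  # negation = complement
            return False
    for p, q in combinations(family, 2):
        if p | q not in family:  # disjunction = union
            return False
    return True


rain = frozenset({1, 2})
not_rain = WORLDS - rain

# A family that assigns a likelihood to rain but not to its negation.
unclosed_family = {rain}

# The smallest closed family containing rain.
closed_family = {frozenset(), rain, not_rain, WORLDS}

unclosed_ok = closed_under_negation_and_disjunction(WORLDS, unclosed_family)
closed_ok = closed_under_negation_and_disjunction(WORLDS, closed_family)
```

The first family is the kind of case the axiom rules out: rain has a nomic likelihood but not-rain does not. The second family passes the check.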

2. Nothing we’ve said so far requires all triples with nomic likelihoods to be comparable, or requires comparisons between triples to be transitive. For all we’ve said, it could be the case that it raining (given meteorological conditions at is more nomically likely than it snowing (given at and it snowing (given at is more nomically likely than it being sunny (given at but it raining (given at is neither more nomically likely than, less nomically likely than, or on a par with, it being sunny (given at The second axiom rules this out, by ensuring that all triples with nomic likelihoods are comparable, and that these comparisons are transitive.

Axiom 2 (Weak Order):

is connected: for all in either or

is transitive: for all in if and then

Formally, this axiom ensures that the nomic likelihood relation provides a weak ordering of
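
The two clauses of this axiom can be checked mechanically on a finite toy relation. The following Python sketch is only an illustration under that finiteness assumption; the labels stand in for triples, and the function names are hypothetical. It encodes the rain/snow/sun case described above, which the axiom excludes, alongside a relation that does satisfy both clauses.

```python
from itertools import product

ITEMS = ("rain", "snow", "sun")


def is_connected(items, pairs):
    """Every two items are comparable in at least one direction."""
    return all(
        (x, y) in pairs or (y, x) in pairs
        for x, y in product(items, repeat=2)
    )


def is_transitive(items, pairs):
    """Whenever x >= y and y >= z both hold, x >= z holds too."""
    return all(
        (x, z) in pairs
        for x, y, z in product(items, repeat=3)
        if (x, y) in pairs and (y, z) in pairs
    )


# The excluded case: rain beats snow, snow beats sun,
# but rain and sun are not comparable at all.
bad_pairs = {
    ("rain", "rain"), ("snow", "snow"), ("sun", "sun"),
    ("rain", "snow"), ("snow", "sun"),
}

# A weak order: the same comparisons plus rain over sun.
good_pairs = bad_pairs | {("rain", "sun")}

bad_is_weak_order = is_connected(ITEMS, bad_pairs) and is_transitive(ITEMS, bad_pairs)
good_is_weak_order = is_connected(ITEMS, good_pairs) and is_transitive(ITEMS, good_pairs)
```

The first relation fails both clauses, since rain and sun are incomparable and the chain from rain through snow to sun is not closed. The second relation satisfies both.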

3. The previous axioms haven’t imposed any constraints on how the nomic likelihoods assigned to members of different line up with each other. For example, as it stands, it could be that all the triples in one cluster are more nomically likely than all the triples in another. The third axiom ensures that triples whose consequent propositions are trivially true or trivially false have the same nomic likelihoods in all Intuitively, this ensures that the “ceiling” and “floor” of nomic likelihoods is the same at all clusters.

Axiom 3 (Cross-algebra Comparisons):

If and are in

If and are in

4. So far, nothing we’ve said requires there to actually be any triples with nomic likelihoods. For all we’ve said, it could be the case that all are empty. And even if we assume there are non-empty clusters, nothing we’ve said requires them to be fine-grained. E.g., it could be the case that every triple which has a nomic likelihood is on a par with (say) one of three triples, entailing that there are effectively only three degrees of nomic likelihood. And even if we assume there is a cluster with a rich range of nomic likelihoods, nothing we’ve said requires these nomic likelihoods to be fine-grained enough to distinguish between consequent propositions that are nomically required and ones which are “just” overwhelmingly likely (e.g., that at least one of infinitely many fair coin tosses lands heads). The fourth axiom imposes “richness” requirements that ensure there’s an appropriately fine-grained range of nomic likelihoods.

Axiom 4 (Rich Algebra): There exists a particular cluster, call it (for “rich”), with the following features:

-

There is a pair of triples in call them and such that:

(a)

(b) For all such that

(c) For all such that

-

There are no in such that, for any in such that either:

(a)

(b)

(c)

(d) and

For any and in such that there’s some and in such that and

This is an important axiom, so it’s worth talking through what it says in a bit more detail. This axiom posits the existence of a “rich” cluster, The three clauses of this axiom ensure that is rich in three different ways. (This axiom is compatible with there being multiple clusters which satisfy these clauses. But is a name for a particular one of them.)

The first clause entails that in this rich cluster there’s (i) a “next highest” rank of nomic likelihood, which sits below but above every other rank, and (ii) a “next lowest” rank of nomic likelihood, which sits above but below every other rank. I use the names and for some particular triples in that have these ranks. (This clause is compatible with there being multiple triples in which have these ranks. But and are names for a particular pair of them.)

It’s worth emphasizing that and are names for two particular triples in not names for the consequent propositions of some triples whose indices have been left implicit. (E.g., I’m not using as shorthand for is not the name of a proposition.) Thus and will never be expressed with indices; the second and third elements of these triples are fixed.

In what follows, it will be convenient to have a name for triples whose rank is such that I’ll say that such triples have a middling rank.

The second clause is the analog of the standard “atomless” assumption.22 Roughly, it ensures that in this rich cluster, any triple of at least middling rank can always be decomposed into smaller triples of middling rank.

The third clause ensures that every degree of nomic likelihood is instantiated in That is, it entails that is rich enough to be such that every triple in is nomically on a par with some triple in 23

5. Intuitively, nomic likelihoods should satisfy something like a qualitative notion of additivity. For example, given meteorological conditions at world if it raining is more nomically likely than it snowing then it raining or being sunny should be more nomically likely than it snowing or being sunny 24 The fifth axiom ensures that nomic likelihoods will satisfy this kind of additivity requirement.

Axiom 5 (Restricted Cross-algebra Additivity): Suppose that that and that none of the following three conditions hold: (i) (ii) (iii) Then iff

It’s worth flagging two ways in which this qualitative additivity axiom differs from typical qualitative additivity axioms. First, typical qualitative additivity axioms don’t include conditions (i)–(iii). But the introduction of and requires the additivity claim to be restricted to cases where none of conditions (i)–(iii) hold.25 Second, typical qualitative additivity axioms effectively only apply within a single cluster. But in order to “import” richness facts from other clusters, we need the additivity claim to apply to triples belonging to different clusters.26
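The basic idea behind qualitative additivity can be illustrated numerically. The following Python sketch covers only the simple, unrestricted, single-cluster form of additivity: it does not model conditions (i)–(iii) or cross-cluster comparisons. The numerical values are hypothetical and serve only to induce an ordering; the function names are likewise assumptions made for illustration.

```python
from fractions import Fraction

# Hypothetical weights over three mutually exclusive weather outcomes.
# They serve only to induce a likelihood ordering for the illustration.
weight = {
    "rain": Fraction(1, 2),
    "snow": Fraction(1, 6),
    "sunny": Fraction(1, 3),
}

def likelihood(event):
    """Total weight of an event, represented as a set of outcomes."""
    return sum(weight[outcome] for outcome in event)

def additivity_holds(a, b, c):
    """Check: for c disjoint from a and b, a > b iff (a or c) > (b or c)."""
    if (a & c) or (b & c):
        raise ValueError("c must be disjoint from both a and b")
    return (likelihood(a) > likelihood(b)) == (likelihood(a | c) > likelihood(b | c))

rain = frozenset({"rain"})
snow = frozenset({"snow"})
sunny = frozenset({"sunny"})

print(likelihood(rain) > likelihood(snow))                  # True
print(likelihood(rain | sunny) > likelihood(snow | sunny))  # True
print(additivity_holds(rain, snow, sunny))                  # True
```

Any ordering induced by an additive numerical assignment passes this check automatically; the axiom imposes the corresponding requirement directly on the qualitative relation.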

6. The sixth axiom plays an important role in establishing the representation and uniqueness theorem, but it’s a bit harder to get an intuitive grip on than the other axioms. Consider a sequence of triples from some cluster that’s “expanding”, in the sense that the consequent proposition of each triple in the sequence is entailed by the consequent propositions of all the earlier members of the sequence. And suppose some other triple is more nomically likely than any triple in this sequence. Then it’s natural to think that should also be more nomically likely than a triple whose consequent proposition is the disjunction of all of the consequent propositions in this sequence. This is what the sixth axiom requires.

Axiom 6 (Continuity): If for all and then

Formally, this axiom ensures that the relation is monotonically continuous.

7. So far we’ve said little about how the nomic likelihoods of triples on a par with and behave. For example, given conditions at world suppose the nomic likelihood of a certain coin landing heads is middling, the nomic likelihood of an independent sequence of infinitely many coins all landing heads is on a par with and the nomic likelihood of at least one coin in this infinite sequence landing tails is on a par with How does the nomic likelihood of the coin landing heads compare to that of the coin landing heads or the infinite sequence of coins all landing heads Likewise, how does the nomic likelihood of the coin landing heads compare to that of the coin landing heads and at least one of an independent infinite sequence of coins landing tails The seventh axiom settles the answer to these questions, holding in both cases that the likelihoods are the same.

In particular, the seventh axiom entails that adding things on a par with can only result in a change of likelihood in extremal cases, when it’s added to something on a par with or Likewise, it entails that intersecting things on a par with can only result in a change of likelihood in extremal cases, when it’s intersecting something on a par with or

Axiom 7 Differences):

If and then

If and then

8. We haven’t yet imposed any requirements tying nomic likelihood to truth. For all we’ve said, it could be the case that if meteorological conditions hold at world then it’s maximally likely that it will rain and meteorological conditions do hold at and yet it doesn’t rain at The eighth axiom ensures that nomic likelihood is tied to truth in the way we’d expect.

Axiom 8 (Ω Instantiation): If and then

9. Nothing we’ve said so far has imposed conditions tying the fact that if obtained at then would have a certain likelihood to the possibility of obtaining. Consider the set of worlds containing all the worlds that assign the same nomic likelihoods as world (I.e., if then for all in iff And suppose that given meteorological conditions at a world in there’s a certain nomic likelihood of rain As it stands, this could be true even though there’s no world in at which conditions hold. One might take this to be implausible. If there’s a certain likelihood of rain given certain meteorological conditions at then there should be some nomically similar world where those meteorological conditions obtain. The ninth axiom ensures that this is the case.

Axiom 9 (Antecedent Instantiation): If is in then there exists a such that for all in iff

10. Nothing we’ve said so far has imposed conditions tying the fact that if obtained at then would have a middling likelihood to the possibility of obtaining. Suppose that given meteorological conditions at a world in there’s a middling nomic likelihood of rain As things stand, it could be the case that it rains at every world in where obtains, even though it only has a middling likelihood of doing so. Likewise, it could be the case that it doesn’t rain at any world in where obtains, even though it has a middling nomic likelihood of doing so. Both scenarios are implausible: if there’s a middling likelihood of rain, then there should be some in where it rains, and some where it does not. The tenth axiom ensures that this is the case.

Axiom 10 (Chancy Instantiation): If then there exists a and such that:

For all in iff iff

and

and

11. The previous axioms haven’t imposed any constraints on what triples there are in different indexed to the same world. Suppose that given meteorological conditions at there’s a middling likelihood of it raining the next day and a middling likelihood of it raining the day after that And consider the nomic likelihoods that might obtain at given those meteorological conditions and that it rains the first day For all we’ve said so far, it could be that given at there’s a maximal likelihood assigned to it raining the first day but no likelihood at all—whether high or low—assigned to it raining the second day That is, it could be that the is simply silent about the likelihood of it raining the second day. This is odd. If the assigns a nomic likelihood to it seems the should as well. The eleventh axiom ensures this, by requiring clusters at the same world to have consequent propositions that line up with each other.

Axiom 11 (Same Algebra): Suppose that that and that the is not empty. Then is in iff is in 27

12. Axiom 11 ensures that clusters at the same world have consequent propositions that line up with each other. But while axiom 11 ensures that these clusters will assign nomic likelihoods to the appropriate propositions, we haven’t yet said anything about what the magnitudes of these nomic likelihoods should be. Suppose that given meteorological conditions at there’s a middling likelihood of it raining the next day a middling likelihood of it raining the day after that and a smaller but still middling likelihood of it raining both days Given those meteorological conditions and that it rains the next day what should the likelihood of it raining both days be? For all we’ve said so far, it could be anything, including on a par with the trivially true proposition or the trivially false proposition This is implausible: the likelihood of it raining both days should be middling. The twelfth axiom ensures this, by requiring the nomic likelihoods assigned by same-world clusters to line up in the way you’d expect.

Formulating the twelfth axiom precisely requires a little stage-setting. Let an n-equipartition of a cluster be a set of triples which are all nomically on a par with each other, and whose consequent propositions are mutually exclusive and exhaustive.28 Let be a function such that: iff for any of the rich cluster and any in

= if

= if and

= if

Intuitively, takes a natural number and a triple and spits out a natural number indicating that the nomic likelihood of is at least that of but less than that of Thus if we know the nomic likelihood of is less than of if we know the nomic likelihood of is at least but less than of and so on; and if we know the nomic likelihood of is at least of i.e., is exactly that of

Axiom 12 (Algebra Coordination): Suppose that and are in If and if it’s not the case that there’s some such that for all or then:

This axiom ensures that if the and the agree on the proportion of nomic likelihood that contributes to likelihood.

Some key lemmas that follow from the axioms are described in appendix A.1. The proofs of these lemmas are given in appendix A.2.

5. The Nomic Likelihood Account (III): The Account

In this Section I finish developing the Nomic Likelihood Account. In Section 5.1 I’ll present a representation and uniqueness theorem regarding the nomic likelihood relation. In Section 5.2, using these results, I’ll present the Nomic Likelihood Account of laws and chances. In Section 5.3 I’ll present some consequences of this account regarding laws and chances. And in Section 5.4 I’ll present a toy example of some complete laws given the Nomic Likelihood Account.

Before we proceed, it’s worth sketching the role that the representation and uniqueness theorem plays in this account. It’s helpful to start with an analogy. In the decision theory literature, people have offered representation and uniqueness theorems showing that if a subject’s preferences satisfy certain conditions, then there’s a (more or less) unique pair of functions that line up with these preferences in the way you’d expect rational credences and utilities to line up with them. One popular account of credences and utilities identifies them with the functions picked out by these theorems.29 On this account, credences and utilities are just things that encode facts about a subject’s preferences. And if we adopt this account, the theorem provides a straightforward explanation for why credences and utilities deserve the numerical values we assign them—because these are the only numerical assignments that line up with preferences in the right way.

Similarly, the representation and uniqueness theorem described in Section 5.1 shows that if the nomic likelihood relation satisfies certain conditions, then there’s a unique function and pair of relations that line up with these nomic likelihood relations in the way you’d expect chances and nomic requirements/forbiddings to line up with them. The Nomic Likelihood Account identifies chances and nomic requirements/forbiddings with the function and relations picked out by the theorem. On this account, chances and nomic requirements/forbiddings are just things that encode facts about the web of nomic likelihood relations. And if we adopt this account, the theorem provides a straightforward explanation for why chances deserve the numerical values we assign them—because these are the only numerical assignments that line up with the nomic likelihood relations in the right way.

5.1. The Representation and Uniqueness Theorem

We can partition the space of worlds such that two worlds and are in the same cell of the partition iff, for all and all in iff Intuitively, two worlds are in the same cell of this partition iff the same nomic facts hold at both worlds. I’ll call this the nomic partition. I’ll use etc., to denote different cells of this partition, and to denote the cell is in.
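The construction of the nomic partition can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: each world's nomic facts are summarized as a finite set of comparison facts with the world index abstracted away, and the world names, proposition labels, and the `nomic_partition` function are all hypothetical.

```python
from collections import defaultdict

def nomic_partition(profiles):
    """Group worlds into cells: two worlds share a cell iff their profiles match.

    `profiles` maps a world name to a set of comparison facts. Each fact is a
    pair ((A, K), (B, K2)) read as: at this world, consequent A given
    antecedent K is at least as nomically likely as consequent B given K2.
    """
    cells = defaultdict(list)
    for world, facts in profiles.items():
        cells[frozenset(facts)].append(world)
    return sorted(sorted(cell) for cell in cells.values())

# Hypothetical (consequent, antecedent) pairs for a single coin toss.
heads = ("heads", "toss set-up")
tails = ("tails", "toss set-up")

profiles = {
    "w1": {(heads, tails)},  # heads at least as likely as tails
    "w2": {(heads, tails)},  # same nomic facts as w1
    "w3": {(tails, heads)},  # the comparison runs the other way
}

cells = nomic_partition(profiles)
print(cells)  # [['w1', 'w2'], ['w3']]
```

Worlds `w1` and `w2` land in the same cell because they agree on every comparison fact, which mirrors the idea that the same nomic facts hold at both.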

The following theorem is shown in appendix B:30

The Representation and Uniqueness Theorem: If satisfies the nomic likelihood axioms, then there’s a unique function (that takes three propositions and as arguments, and spits out a real number between and and a unique pair of three-place relations and (that hold between a pair of propositions and and a world 31 such that:

iff for any and either:

(a)

(b) and and

(c) and and

iff

iff

Furthermore, the function will be a countably additive probability function.32

This theorem shows that the nomic likelihood relation can be uniquely represented by a countably additive probability function which assigns numbers that line up with the nomic likelihood relation, and a pair of relations (nomically required) and (nomically forbidden) that hold between the members of a triple iff it’s maximally or minimally nomically likely, respectively.

5.2. The Account of Laws and Chances

Given the representation and uniqueness theorem, we can provide an account of laws, chances, and nomic requirements and forbiddings, as follows.

Complete Laws of Nature: A world has complete laws of nature iff 33

It will be convenient to follow Lewis (1979) and identify properties with the set of possible individuals that instantiate them. Since the property of being a world with laws picks out the same set of worlds as the proposition that laws obtain, it follows that Thus we can refer to the laws as both properties and propositions, since they’re both.

The Nomic Likelihood Account then identifies chances, nomic requirements and nomic forbiddings with the function and relations provided by the representation and uniqueness theorem:

Chances: The chance of given complete laws and antecedent is iff

Nomic Requirements: If holds at then is nomically required to hold at iff

Nomic Forbiddings: If holds at then is nomically forbidden from holding at iff

5.3. Some Lemmas Regarding Laws and Chances

The second desideratum discussed in Section 2.1 was that an adequate account should yield plausible connections among laws and chances. We can now show some of the ways in which the Nomic Likelihood Account satisfies this desideratum by describing some further lemmas that follow from the nomic axioms described in Section 4.3, and the account of laws and chances offered in Section 5.2. (The numbering of these lemmas starts at 10 because they follow the 9 lemmas given in appendix A.1. The derivations of these lemmas are given in appendix C.)

10. If (given at there’s some likelihood of and entails then it seems should be nomically required. E.g., suppose there’s some nomic likelihood of rain given that it’s raining hard at Then, given that it’s raining hard at it should be nomically required that it rains. This is what the tenth lemma shows.

Lemma 10: If is in and entails then

11. It seems like nomic requirements should be closed under entailment. For example, if (given at it’s nomically required that it be rainy and nomically required that it be windy then it should be nomically required that it be rainy and windy This is what the eleventh lemma says.

Lemma 11: For all in If entail and then

12. It seems nomic requirements and nomic forbiddings should be linked: if is nomically required, then should be nomically forbidden, and vice versa. For example, if (given at it’s nomically required that it rain then it should be nomically forbidden that it not rain and vice versa. This is what the twelfth lemma states.

Lemma 12: iff

13. It seems like nomic requirements and forbiddings should be tied to the truth. For example, if (given at rain is nomically required, and obtains, then it should rain. Likewise, if (given at rain is nomically forbidden, and obtains, then it shouldn’t rain. This is what the thirteenth lemma asserts.

Lemma 13:

If and then

If and then

14. It seems nomic likelihoods should be tied to chances. For example, if (given at the nomic likelihood of rain is on a par with or then the chance of rain (given and the laws that hold at should be Likewise, if the nomic likelihood of rain is on a par with or then the chance of rain should be And if the nomic likelihood of rain is middling, then the chance of rain should be greater than but smaller than This is what the fourteenth lemma says.

Lemma 14:

If then

If then

If

15. It seems related chance distributions should assign chances to the same propositions. For example, suppose and yield a well-defined chance distribution over a sequence of fair coin tosses. And suppose the conjunction of and the first fair coin toss landing heads (call this conjunction and also yield a well-defined chance distribution. It would be strange if assigned chances to coin tosses that did not assign chances to, or vice versa. Rather, it seems and should assign chances to the same propositions. This is what the fifteenth lemma says.

Lemma 15: If and is well-defined, then for all is well-defined iff is well-defined.

16. It seems related chance distributions should have related chance assignments. For example, suppose and yield a well-defined chance distribution over a sequence of independent coin tosses, and this distribution assigns a chance of to the first coin landing heads and chance of to the first two coin tosses landing heads And suppose the conjunction of and the first fair coin toss landing heads—i.e., also yield a well-defined chance distribution. What chance should assign to the first two coin tosses landing heads? Given the chances assigns, it seems the right answer is This is what the sixteenth lemma entails.

Lemma 16: If and and are well-defined, then
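The coin toss example can be worked through as a conditionalization calculation. The sketch below assumes two independent fair tosses, so the first toss lands heads with chance 1/2 and both land heads with chance 1/4. These specific numbers are assumptions made for the illustration, as are the names `ch`, `first_heads`, and `both_heads`.

```python
from fractions import Fraction
from itertools import product

# Assumed set-up: two independent fair coin tosses, so each of the four
# outcome sequences gets chance 1/4. These numbers are illustrative.
outcomes = list(product("HT", repeat=2))
chance = {outcome: Fraction(1, 4) for outcome in outcomes}

def ch(event, given=lambda outcome: True):
    """Chance of `event` conditional on `given`, via the ratio formula."""
    denominator = sum(p for o, p in chance.items() if given(o))
    if denominator == 0:
        raise ValueError("cannot conditionalize on a chance-zero condition")
    numerator = sum(p for o, p in chance.items() if given(o) and event(o))
    return numerator / denominator

def first_heads(outcome):
    return outcome[0] == "H"

def both_heads(outcome):
    return outcome == ("H", "H")

print(ch(first_heads))                    # 1/2
print(ch(both_heads))                     # 1/4
print(ch(both_heads, given=first_heads))  # 1/2
```

Once the first toss landing heads is added to the antecedent, the chance of both tosses landing heads is the ratio of the two original chances, which is how distributions related by conditionalization behave.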

5.4. A Toy Example

It can be helpful to see a concrete example of some complete laws on the Nomic Likelihood Account. But it’s hard to do so concisely for realistic physical theories. So I’ll instead present a toy example corresponding to a pair of cases discussed in Section 2.1: a pair of worlds in which there’s only one chance event, a coin toss, where the chance of heads is in one world, and in the other.

Let be a proposition describing the state of a world at consisting of a certain coin toss set-up, and let be a proposition stating that the outcome of this coin toss was heads. Let be a world such that there are only four triples indexed to in and Let be on a par with the triples in the rich cluster that are assigned a value of by the representation and uniqueness theorem.

The complete laws of will consist of the set of worlds in cell of the nomic partition. And these laws describe a world in which there’s almost nothing of nomic interest going on: there’s only a single non-trivial chance event—a coin toss—which has a chance of of landing heads.

We can also consider a world such that the only triples indexed to in are: and And in this case, is on a par with the triples in the rich cluster that are assigned a value of The complete laws will consist of the set of worlds with the same nomic facts as and these laws describe a world in which there’s only a single non-trivial chance event—a coin toss—which has a chance of of landing heads.

6. The Nomic Likelihood Account and the Desiderata

Now let’s turn to see how the Nomic Likelihood Account fares with respect to the five desiderata given in Section 2.1.

Desideratum 1. An adequate account should provide a unified (and appropriately discriminating) account of laws and chances.

The Nomic Likelihood Account provides a unified account of laws and chances, characterizing both in terms of the nomic likelihood relation (cf. Section 5.2). Probabilistic and non-probabilistic laws are treated similarly, with the laws that impose nomic requirements just being stronger versions of the laws that impose chances. And the Nomic Likelihood Account is appropriately discriminating, distinguishing between propositions that are nomically required and propositions that have a chance of but aren’t nomically required.

Desideratum 2. An adequate account should yield plausible connections between laws and chances, laws and other laws, and chances and other chances.

The Nomic Likelihood Account yields the kinds of relations between laws and chances that one would expect (cf. Section 5.3). For example, it entails that nomically required propositions are not nomically forbidden, and vice versa; it entails that nomic requirements are closed under entailment;34 it entails that nomically required propositions will have a chance of and nomically forbidden propositions a chance of it entails that chance distributions at the same world will be related by conditionalization; and so on.

Desideratum 3. An adequate account should describe what, at the fundamental level, makes it the case that chance events deserve the numerical values they’re assigned.

The Nomic Likelihood Account provides a satisfactory explanation for why chance events deserve the numerical values we assign them. At the fundamental level we have various instantiations of the nomic likelihood relation which satisfy certain constraints (cf. Sections 4.1 and 4.3). And we have a representation and uniqueness theorem that shows that there is exactly one way of assigning numbers in the interval [0, 1] to propositions so that these assignments line up with these nomic likelihood relations (cf. Sections 5.1 and 5.2). Since the Nomic Likelihood Account identifies chances with these assignments, it provides an explanation for why chance events deserve the numerical values we assign them.

Desideratum 4. An adequate account should be able to accommodate both dynamical and non-dynamical chances (like those of statistical mechanics).

The Nomic Likelihood Account itself doesn’t appeal to a distinction between “dynamical” and “non-dynamical” chances. But we can distinguish between different kinds of chances, and see what the Nomic Likelihood Account entails about them.

Here is one way to draw such a distinction. Let’s say that a world has non-trivial chances iff there are middling likelihood triples indexed to Call these chances dynamical iff all of the middling likelihood triples indexed to have an antecedent proposition describing a complete history up to some time.35 Call these chances non-dynamical iff they’re not dynamical.36

Given this characterization of dynamical chances, the Nomic Likelihood Account will entail that dynamical chances will have the features they’re expected to have. For example, the Nomic Likelihood Account will entail that worlds with dynamical chances can’t have deterministic laws. If has deterministic laws, then every likelihood-having triple indexed to that has a complete history as its antecedent proposition will either be nomically required or nomically forbidden (depending on whether and entail the triple’s consequent proposition or its negation). Since none of these triples have a middling likelihood, it follows that can’t have dynamical chances.37
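The classification just described can be sketched as a small Python function. This is a simplified illustration: ranks are collapsed into three coarse labels, each triple carries a flag saying whether its antecedent is a complete history up to some time, and the `Triple` class, the field names, and the example propositions are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Triple:
    consequent: str
    antecedent: str
    complete_history: bool  # is the antecedent a complete history up to a time?
    rank: str               # coarse label: "required", "middling", or "forbidden"

def classify_chances(triples):
    """Classify the chances of a world from the triples indexed to it."""
    middling = [t for t in triples if t.rank == "middling"]
    if not middling:
        return "no non-trivial chances"
    if all(t.complete_history for t in middling):
        return "dynamical"
    return "non-dynamical"

# A world whose only middling triple has a complete history as antecedent.
chancy_world = [
    Triple("atom decays by t+1", "history up to t", True, "middling"),
]

# A deterministic world: every complete-history triple is required or forbidden.
deterministic_world = [
    Triple("ball lands", "history up to t", True, "required"),
    Triple("ball hovers", "history up to t", True, "forbidden"),
]

# The same deterministic triples plus a middling triple whose antecedent
# is not a complete history.
mixed_world = deterministic_world + [
    Triple("ice cube in five minutes", "lukewarm water at t", False, "middling"),
]

print(classify_chances(chancy_world))         # dynamical
print(classify_chances(deterministic_world))  # no non-trivial chances
print(classify_chances(mixed_world))          # non-dynamical
```

The deterministic case never comes out as dynamical, because none of its complete-history triples is middling.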

Likewise, the Nomic Likelihood Account will entail that at worlds with dynamical chances, propositions about the past can only be assigned a chance of or Let be a world with dynamical chances, a history up to and some proposition about what the world is like prior to such that has some likelihood. By construction will entail either or from which it follows (by lemmas 10 and 12) that is either nomically required or nomically forbidden. Thus (by lemma 14) the chance of is either or

By contrast, the Nomic Likelihood Account will allow worlds with deterministic laws to have non-dynamical chances, and so can accommodate classical mechanical worlds with statistical mechanical chances. For example, let the laws of be those of classical statistical mechanics,38 let be the claim that the world at consists of a small isolated system containing uniform lukewarm water, and let be the claim that the world five minutes after consists of a small isolated system containing an ice cube in hot water. and don’t entail whether is true or not: the laws and the fact that the world consists of uniform lukewarm water don’t entail that there will be an ice cube in five minutes, nor do they entail that there won’t be. So can have a middling likelihood even though the laws at are deterministic.

Likewise, the Nomic Likelihood Account doesn’t require non-dynamical chances to assign propositions about the past a chance of or Consider a variant of the example from above, where has classical statistical mechanical laws, asserts that the world at consists of lukewarm water, and asserts that the world five minutes before consists of an ice cube in hot water. is compatible with both the truth and falsity of world consisting of lukewarm water at is compatible with both there being an ice cube five minutes ago and there not being such an ice cube. So can have a middling likelihood, even though is a proposition about the past.39

Desideratum 5. An adequate account should be able to accommodate plausible nomic possibilities.

The Nomic Likelihood Account can accommodate a wide range of plausible nomic possibilities. For example, since the only kind of consequent proposition the account can’t assign nomic likelihoods to are propositions concerning nomic facts (Section 4.1), the account allows nomic likelihoods to be assigned to propositions about particular locations, times, and objects. Thus the account allows for laws about particular locations, times, and objects, like the case of Smith’s garden discussed by Tooley (1977). Likewise, the account can assign nomic likelihoods to triples even if both their consequent and antecedent propositions are false (Section 4.3, axiom 8). Thus it can allow for worlds with uninstantiated laws, like a world where is a law but there are no massive objects. And as we saw in Section 5.4, the account can make sense of a world with a single chance event, a coin toss, where the chance of heads is and an otherwise identical world where the chance of heads is

7. Worries

Let’s turn to assess some worries one might raise for the Nomic Likelihood Account.

1. The Ontological Worry: The nomic likelihood relation is a fundamental relation defined over propositions and worlds. Characterizing the laws in terms of such a relation commits one to having propositions and possible worlds in one’s ontology.

Reply: First, note that the Nomic Likelihood Account doesn’t require one to understand propositions and worlds in a metaphysically heavyweight way. For example, one might identify propositions with sets of worlds, and adopt a metaphysically lightweight understanding of worlds themselves, like the one advocated by Stalnaker (2011).

Second, although I’ve characterized the nomic likelihood relation as taking propositions and worlds as relata, one could characterize the relation in other ways to avoid these commitments. If one doesn’t like propositions, one could replace the appeal to propositions with an appeal to properties, i.e., the property of being a world at which the relevant proposition is true. Or one could replace the appeal to propositions with an appeal to Chisholm-style states of affairs.40

Likewise, if one doesn’t like worlds, one could replace the appeal to worlds with an appeal to propositions, i.e., the maximally specific propositions describing that possibility. (On this approach, of course, one would not identify propositions with sets of worlds.) Or one could replace the appeal to worlds with an appeal to very detailed properties or states of affairs. These alternative characterizations of the nomic likelihood relation would require only superficial modifications to the details presented in Sections 4 and 5.41

2. The Explanatory Worry: The Nomic Likelihood Account allows for pairs of worlds that, nomic facts aside, are qualitatively identical, and yet which differ with respect to their laws. (For example, the pair of worlds discussed in Section 5.4.) But it’s hard to see how such an account could explain why these worlds differ with respect to their laws, other than simply stipulating that different nomic likelihood relations hold of them. And that seems little better than being a primitivist about laws.42

Reply: The Nomic Likelihood Account is, indeed, similar to primitivist accounts of laws in these respects.43 But I don’t take this to be a problem for the Nomic Likelihood Account. The complaint I raised in Section 2.2 about primitivist accounts like Carroll’s (1994) wasn’t that they took nomic facts to be primitive, or that they couldn’t explain why certain laws obtained without appealing to nomic facts. After all, pretty much any non-Humean account is going to have to appeal to some kind of brute modal or nomic facts. Rather, the complaint was that accounts like Carroll’s don’t provide the kind of detailed framework needed to satisfy desiderata 2 and 3: to yield plausible connections among laws and chances, and to show why chance events deserve the numerical values we assign them. And this is a demerit the Nomic Likelihood Account does not share.

3. The Duplication/Intrinsicality Worry: Following David Lewis (1983), let’s say two worlds are duplicates iff there is a bijection between their parts that preserves their fundamental properties and the fundamental relations holding between them. Since the nomic likelihood relation holds between a world and things that aren’t a part of that world (i.e., another world and several propositions), it won’t play a role in our assessment of whether worlds are duplicates. Indeed, one world can be a duplicate of another even if one bears various nomic likelihood relations and the other bears no nomic likelihood relations at all. And since the laws of a world are determined by its nomic likelihood relations, it follows that duplicate worlds needn’t have the same laws. This is implausible.

Likewise, following David Lewis (1983), let’s say that a property is intrinsic iff it never divides duplicates: any two things that are duplicates either both have this property or both fail to have this property.44 It follows that the laws of a world aren’t intrinsic properties of that world. This is implausible.

Reply: To begin, it’s worth noting that an analogous worry arises for a popular measurement theoretic account of quantitative properties like mass and charge.45 This account posits some fundamental relations over objects corresponding to each quantitative property (e.g., in the case of mass, a mass ordering and a mass concatenation relation) and then uses those relations to characterize the quantitative structure of that property. Now, note that the up quark and the charm quark are identical in every way except for their mass. Since on this account these differences of mass are the result of the different mass relations they stand in, it follows that the up quark and the charm quark will be duplicates. Indeed, given a similar account of other quantitative properties, it will follow that all fundamental particles are duplicates. This seems implausible. Likewise, it will follow that the derivative monadic quantitative properties (e.g., having a particular mass) will not be intrinsic. Again, this seems implausible.

There are three ways for the proponent of the Nomic Likelihood Account to reply to the worries raised above. These replies mirror the options available to the proponents of the popular measurement theoretic account of quantitative properties just described. They can (1) challenge the characterizations of duplication and intrinsicality given above, (2) modify the posits the theory makes, or (3) bite the bullet. I won’t discuss the third reply,46 but let’s look at each of the first two replies more carefully.

(1) Let’s start by distinguishing between two kinds of relations. First, there are relations that only hold between things located at the same possible world; call these connecting relations. Spatiotemporal relations are connecting relations—you can’t be five feet from something located at a different possible world. Second, there are relations that can hold between things that are located at different possible worlds; call these non-connecting relations. The more-mass-than relation is a non-connecting relation—we can make sense of something at another possible world having more mass than me.47

Intuitively, qualitative duplicates are perfectly alike “in and of themselves”. That is, duplicates must share their monadic fundamental properties. By contrast, duplicates need not be alike in how they are connected to other things—two copies of a book may differ in their spatiotemporal relations to me and still be duplicates. That is, duplicates can differ with respect to their fundamental connecting relations. But these two truisms leave open the question of whether duplicates should be alike with respect to their non-connecting relations. One thought is that duplicates must also be alike with respect to their fundamental non-connecting relations. So in order for two objects to be duplicates, they must not only share their monadic fundamental properties, they must also stand in the same kinds of fundamental non-connecting relations—e.g., they must bear the more-mass-than relation to the same things.

This suggests an alternative to Lewis’s account of duplication. Let’s say that a pair of objects are interchangeable with respect to a relation iff whenever that relation holds between the first object and certain other things, it also holds between the second object and those same other things. Now let’s say that two things are duplicates in a stronger sense iff (i) one can form a bijection between the parts of the one and the parts of the other that preserves the fundamental properties and fundamental relations between them (i.e., they’re duplicates in Lewis’s sense), and (ii) they are interchangeable with respect to all fundamental non-connecting relations. One might propose that our ordinary notion of duplication is this stronger notion.48
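The interchangeability condition can be sketched formally as follows. The notation is an assumption introduced only for illustration: $a$ and $b$ are the two objects, $R$ is an $n$-place relation, and $x_1, \dots, x_{n-1}$ stand for the other relata. Reading the condition as a biconditional, so that it is symmetric in $a$ and $b$, is likewise an assumption.

```latex
% Assumed notation (not taken from the paper): R is an n-place relation,
% a and b are objects, and x_1, ..., x_{n-1} range over the other relata.
% a and b are interchangeable with respect to R iff, for every argument
% position i (1 <= i <= n) and every choice of x_1, ..., x_{n-1}:
\[
  R(x_1, \dots, x_{i-1},\, a,\, x_i, \dots, x_{n-1})
  \;\leftrightarrow\;
  R(x_1, \dots, x_{i-1},\, b,\, x_i, \dots, x_{n-1}).
\]
```

On this sketch, two objects that differ in mass fail to be interchangeable with respect to a more-mass-than relation, since some third thing will have more mass than one of them but not the other.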

We saw above that given a popular measurement theoretic account of mass, the up quark and the charm quark will be duplicates in Lewis’s sense. But they won’t be duplicates in the stronger sense just described. The mass ordering and mass concatenation relations are paradigmatic instances of non-connecting relations, and the up and charm quarks aren’t interchangeable with respect to them. So this alternate account of duplication avoids the unpleasant result that the up and charm quarks are duplicates, in the ordinary sense.

Likewise, on the Nomic Likelihood Account, two otherwise identical worlds with different laws will be duplicates in Lewis’s sense. But they won’t be duplicates in the stronger sense just described. The nomic likelihood relation is a non-connecting relation, and these two worlds won’t be interchangeable with respect to it. So given this alternate account of duplication, the proponent of the Nomic Likelihood Account can maintain that worlds with different laws aren’t duplicates, in the ordinary sense.

Turning to intrinsicality, let’s say that a property is intrinsic in the corresponding sense iff it doesn’t divide things that are duplicates in this stronger sense. One might propose that our ordinary notion of intrinsicality is this notion.49 If this is correct, then proponents of this popular measurement theoretic account of mass can maintain that monadic quantitative properties (like having a particular mass) are intrinsic in the ordinary sense.

Likewise, proponents of the Nomic Likelihood Account can maintain that the property of being a world where the laws are is intrinsic in the ordinary sense.

(2) Those who would prefer to keep Lewis’s characterizations of duplication and intrinsicality can respond to this objection in a different way.

As we saw above, according to a popular measurement theoretic account of quantitative properties, things that differ solely with respect to their quantitative properties (e.g., the up and charm quarks) will be duplicates, and the derivative monadic quantitative properties (e.g., having a particular mass) will not be intrinsic. Mundy (1987) and Eddon (2013a) have argued that we should avoid these difficulties by modifying the account. In particular, instead of just positing one layer of fundamental quantitative properties—fundamental quantitative relations that hold between objects—we should posit two layers of fundamental quantitative properties—fundamental monadic quantitative properties instantiated by objects, and fundamental second-order quantitative relations that hold between these monadic properties. Thus, for example, instead of positing fundamental mass-concatenation and mass-ordering relations over objects, we can posit fundamental monadic mass properties (e.g., having a particular mass) that hold of objects, and fundamental mass-concatenation and mass-ordering relations over these monadic mass properties. If we do this, then the up and charm quarks won’t be duplicates, and monadic quantitative properties (like having a particular mass) will be intrinsic.

We can avoid the analogous worries for the Nomic Likelihood Account presented in Sections 3–5 by modifying it in a similar fashion. Namely, instead of positing one layer of fundamental nomic likelihood properties—fundamental nomic likelihood relations over worlds and propositions—we can posit two layers of fundamental nomic likelihood properties—fundamental monadic nomic properties instantiated by worlds, and fundamental second-order nomic likelihood relations that hold between these monadic properties and propositions. In this two-layer picture, the monadic properties will intuitively line up with the complete laws instantiated by that world, And the nomic likelihood relation will replace the appeal to worlds with an appeal to these complete laws, where holds when given if the laws are is at least as nomically likely as given if the laws are 50 If we adopt this two-layer version of the Nomic Likelihood Account, then otherwise identical worlds with different laws won’t be and the property of having complete laws will be as desired.

4. The Holism Worry: Grant that the laws and chances are intrinsic features of the world (cf. worry 3). On the Nomic Likelihood Account, the laws and chances will still be holistic features of the world. This contrasts with a local picture on which, for example, the chance of a coin toss is determined by local features of the coin toss set-up. On this local picture, a local duplicate of this coin toss set-up in another world would have the same chance of landing heads. On the Nomic Likelihood Account, this needn’t be the case.51

Reply: Let’s first get clear on what the distinction between holistic and local pictures of laws and chances amounts to. At a first pass, we can take the disagreement to be about whether there are local regions (regions smaller than a world) such that any duplicate of these regions, in any world, will have the same operative laws and chances. On local pictures there are regions like this: since the laws and chances are local features of regions, and a duplicate of such a region will share its local features, any duplicate of such a region will be governed by the same laws and chances. On holistic pictures, like the one provided by the Nomic Likelihood Account, there aren’t regions like this: since the laws and chances are determined at the world level, and vary from world to world, duplicates of local regions in different worlds generally won’t be governed by the same laws and chances.

I don’t have any strong intuitions about whether the holistic or the local picture is correct.52 So I’m inclined to take this to be a case of spoils to the victor—we should adopt the picture suggested by the account of laws and chances that we independently find most plausible. But I grant that if one is strongly attracted to a local picture of laws and chances, then one has a reason to dislike the Nomic Likelihood Account.

5. The Wrong Grain Worry: The nomic likelihood relation is fine-grained in some respects—for example, it allows us to distinguish between chance propositions that are nomically required and chance propositions that are not. But in other respects it still seems too coarse-grained to capture all of the nomic likelihood facts. For example, suppose a point-like dart is thrown at a one meter interval, with the probability of it hitting any point determined by a bell-curve centered around the point. The nomic likelihood relation will treat the dart landing on the point and the dart landing on the point as on a par But surely the dart landing on the point is nomically more likely than the dart landing on the point.

Reply: It’s true that given the version of the Nomic Likelihood Account developed here, the fundamental nomic likelihood relation won’t be sensitive to such facts. But the proponent of this account can explain (and partially vindicate) these intuitions regarding more fine-grained nomic likelihood facts.53

For example, it’s true that this account will take the dart landing on the point and the dart landing on the point to be on a par But if we consider arbitrarily small neighborhoods surrounding these points (i.e., all points within meters), then the nomic likelihood of landing in the neighborhood of the point will be greater than that of landing in the neighborhood of the point. And we can use this fact to explain the intuition that the dart landing on the point is more likely than it landing on the point.

Likewise, if the probability measure representing the chances is absolutely continuous with respect to some other salient measure, then it follows from the Radon-Nikodym theorem that one can define a probability density with respect to that salient measure (Billingsley 1995). In the dart case described above, the salient measure is length, and we can define the probability density at each point of the one meter interval of the dart landing there. The probability density of the dart landing on the point will be larger than the probability density of the dart landing on the point. And we can use this fact to explain our intuition that the former is more nomically likely.54
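The connection between the neighborhood comparison and the density comparison can be made explicit. What follows is a sketch under assumptions not stated in the text: $x$ and $y$ are hypothetical labels for two interior points of the interval, $P$ is the chance measure, $f$ is its density with respect to length, $f$ is continuous at $x$ and $y$ with $f(y) > 0$, and $N_\epsilon(x)$ is the set of points within $\epsilon$ of $x$.

```latex
% Assumed symbols (labels introduced for illustration): P, f, x, y, N_epsilon.
% For small enough epsilon the neighborhood lies inside the interval, so
\[
  P\bigl(N_\epsilon(x)\bigr) = \int_{x-\epsilon}^{x+\epsilon} f(t)\,dt,
  \qquad
  \lim_{\epsilon \to 0} \frac{P\bigl(N_\epsilon(x)\bigr)}{2\epsilon} = f(x).
\]
% Dividing the corresponding expressions for x and y gives
\[
  \lim_{\epsilon \to 0}
  \frac{P\bigl(N_\epsilon(x)\bigr)}{P\bigl(N_\epsilon(y)\bigr)}
  = \frac{f(x)}{f(y)}.
\]
```

So, under these assumptions, if $f(x) > f(y)$ then for all sufficiently small $\epsilon$ the neighborhood of $x$ has a higher chance than the neighborhood of $y$, even though the two single-point events are on a par.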

8. Conclusion

I’ve suggested (Section 2.1) that an adequate account of laws should satisfy five desiderata: it should (1) provide a unified account of laws and chances, (2) yield plausible relations between laws and chances, (3) explain why we’re justified in assigning numerical values to chance events in the way that we do, (4) allow for both dynamical and non-dynamical chances, and (5) allow for an appropriately expansive range of nomic possibilities. I’ve argued (Section 2.2) that no extant account of laws satisfies these desiderata.

In this paper I’ve developed an account of laws, the Nomic Likelihood Account (Sections 3–5), that satisfies all five desiderata (Section 6). On this account, the fundamental nomic property is a nomic likelihood relation. And laws and chances are things that encode facts about the web of nomic likelihood relations. As I’ve noted, there are various challenges one might raise for this account (Section 7). But I think this is ultimately the most attractive account of laws and chances on offer.

A. Some Lemmas Regarding Nomic Likelihood

A.1. Some Key Lemmas

Lemma 1: For all in

Lemma 2: For all in if then

Lemma 3: For all in

If then

If then

Lemma 4: For all in

If then

If then

Lemma 5: iff

Lemma 6: iff

Lemma 7: If then

Lemma 8: If then

Lemma 9: For all in

If and none of the following three conditions hold: (i) (ii) or (iii) then iff

If and none of the following four conditions hold: (i) (ii) (iii) or (iv) then iff

A.2. Proofs

While the lemmas in Section A.1 are ordered thematically, the proofs are presented in order of dependence (with later lemmas depending on earlier ones, but not vice versa). Most of these proofs implicitly appeal to axioms like 1 and 2 to discharge the existence assumptions of the other axioms they employ; to avoid needless clutter, I’ll leave such appeals implicit.

• Proof of Lemma 9: (1) The first part of the lemma is a special case of axiom 5, where all of the relevant propositions belong to the same cluster, and (Note that while axiom 5 imposes the condition that which entails that is in lemma 5 doesn’t have such a clause since Thus lemma 5 needs to explicitly add the existence assumption “For all C in

(2) The second part of the lemma follows from the first and the assumption that it’s also not the case that (iv) To see this, suppose that the relevant triples are on a par with the empty set, and none of conditions (i)–(iv) hold.

First, let’s establish that if then If then and since none of (i)–(iii) hold, the first part of the lemma entails that Furthermore, entails that and since none of (i), (ii) or (iv) hold, the first part of the lemma entails that (The conditions change because and switch places. Conditions (i) and (ii) treat and symmetrically, but condition (iii) does not; condition (iv) is what you get when you swap and in condition (iii).) So if then