Undergraduate students in STEM who are actively engaged in the learning process in the classroom have improved academic performance as compared with students who sit passively through a lecture (Freeman et al., 2014; Prince, 2004; Ruiz-Primo et al., 2011; Theobald et al., 2020). In response, there continue to be a series of high-profile calls for faculty to transform their courses by replacing lecture with innovative and evidence-based teaching practices (Alberts, 2022; American Association for the Advancement of Science, 2011; Anderson et al., 2011; M. M. Cooper et al., 2015; Harvey et al., 2016). However, few faculty have been trained as to what these teaching methods are or how to effectively implement them in their courses (Handelsman et al., 2004; Pfund et al., 2009). To fill this gap, numerous faculty development efforts have been created to train and assist faculty as they transform their courses to evidence-based teaching (Borda et al., 2020; Callens et al., 2019; Derting et al., 2016; Herman et al., 2018; McConnell et al., 2020; Viskupic et al., 2019).

Research has succeeded in identifying requirements for inspiring and supporting successful faculty development in STEM and across disciplines at the postsecondary level (Austin, 2011; Henderson et al., 2011; Jacobson & Cole, 2020; Marbach-Ad & Rietschel, 2016). Additionally, Rutz et al. (2012) found that students in courses taught by faculty considered “high-participators” in faculty development had higher academic performance compared with students taught by “low-participators.” Unfortunately, even given these findings, lecture remains the predominant mode of teaching in STEM courses (Freeman et al., 2014; Schuster & Finkelstein, 2006; Stains et al., 2018). Therefore, there is still much to learn about how faculty development efforts can better support faculty’s use of evidence-based teaching (Macaluso et al., 2021). We have taken both a quantitative and qualitative approach to assess the faculty development program we created and implemented, the Consortium for the Advancement of Undergraduate STEM Education (CAUSE). Our previous quantitative analysis of classroom observation data found that many of the 45 faculty in CAUSE were using some evidence-based teaching practices at the beginning of the program. There was a significant increase in the use of practices, both through adoption and more frequent use (Jackson et al., 2022). To gain a deeper understanding of how the CAUSE program best supported this process of change, we will take a qualitative approach through a thematic analysis of interviews with faculty in the program. We now briefly introduce the theoretical and logistical foundations of the CAUSE program, which are more fully described in our previous work (Jackson et al., 2022).

Theoretical Foundation of CAUSE

Instructors are nodes in a complex, interacting network, and faculty development efforts need to maintain a simultaneous focus on three interacting levels: (1) the individual, (2) the department, and (3) the institution. Sustainable change requires that we focus on building support networks in the larger academic ecosystem to foster institutional changes in teaching practices.

Previous research on the effectiveness of different faculty development efforts found two early approaches to be clearly inadequate: top-down demands from administrators and disseminating “best curriculums” (Ebert-May et al., 2011; Henderson et al., 2011; Turpen et al., 2016). Specific barriers faculty face when embracing effective teaching methods include the lack of familiarity with and opportunity to learn new methods, lack of support when changing courses, and lack of recognition for making successful changes (Dancy & Henderson, 2008). To address these issues, Henderson et al. (2011) recommended that professional development programs focus on changing faculty teaching beliefs, provide long-term support, and recognize the university as a complex system in which faculty operate.

CAUSE operationalized the theoretical foundation of faculty change. CAUSE both implemented the EPIC model of adoption of new teaching practices—as CAUSE involves exposure, persuasion, identification, commitment, and implementation (Aragón et al., 2018)—and specifically addressed the three major conclusions of Henderson et al.’s (2011) work. The CAUSE program involved a two-year commitment consisting of two phases. The exploration phase increased awareness of evidence-based teaching practices, while the implementation phase provided practice and feedback on changes to teaching. To address the complexity of the university system in which each faculty member is situated, we incorporated a systems approach (Austin, 2011), which recognizes structural differences within an organization, the need for human resources, and political actions. We addressed the structural features of the university by acknowledging the cultural differences between departments and recruiting faculty leads from each department who could navigate those differences. To leverage human resources, we purposely created a community of support and provided objective assessment of teaching practices for each participant to counter possible departmental politics.

The CAUSE Program

The program was conducted at a large R1 institution and was funded by the National Science Foundation (NSF) for three years. Each year we recruited teams of two to three faculty from each of seven STEM departments (biology, chemistry, computer science, mathematics, physics, psychology, and public health) to become CAUSE Fellows. Over the course of the three years, we recruited a total of 45 faculty. Faculty were recruited by the CAUSE Fellow acting as the departmental lead in that department. The departmental lead selected faculty based on their openness to trying new teaching methods. The CAUSE program recruited primarily teaching-track faculty, as they teach large introductory STEM courses and have the opportunity to impact the learning of the greatest number of undergraduates. Faculty who chose to participate made a two-year commitment to the program.

The exploration phase consisted of biweekly meetings facilitated by a faculty member who was engaged in biology education research and was experienced in using evidence-based teaching practices. Though there is a plethora of terms associated with active learning teaching methods (Driessen et al., 2020; Lombardi et al., 2021), we defined evidence-based teaching practices as those identified and reported in our earlier work that created the classroom observation tool, the Practical Observation Rubric To Assess Active Learning (PORTAAL; Eddy et al., 2015). The PORTAAL tool was the result of an extensive literature review of papers in the cognitive sciences, learning sciences, and discipline-based education research fields that presented empirical evidence of a defined set of in-class teaching practices that significantly improved student learning. This definition is almost identical to that presented by Jacobson and Cole (2020). Though evidence-based teaching practices may encompass both in- and out-of-classroom activities, PORTAAL practices are confined to in-class activities. CAUSE Fellows read and discussed many of these papers, and each practice was described. Subsequently, Fellows were encouraged to select a few practices that they felt comfortable using and that were appropriate for their courses. Meetings during the implementation phase focused on group discussions of successes and how to deal with barriers as CAUSE Fellows incorporated the new practices. To provide objective feedback for Fellows on their teaching practices, class recordings were coded each term using PORTAAL (Eddy et al., 2015). An aggregate PORTAAL report for all Fellows and individual PORTAAL reports were generated and distributed at the end of each quarter.

In previous research, we analyzed the change in implementation of 14 PORTAAL practices between the first time a Fellow taught a course while in CAUSE and the last time they taught that same course. We found that the Fellows increased the implementation of three practices while decreasing the implementation of two practices (Jackson et al., 2022). Given these quantitative results, we sought to gain a deeper understanding of Fellows’ experiences in the CAUSE program and their use of evidence-based teaching practices. Therefore, we conducted a series of interviews with a subset of CAUSE Fellows. A qualitative thematic analysis of these interviews allowed us to address the following research questions:

Which aspects of CAUSE made it a useful program for participants?

What factors do Fellows report influenced their implementation of evidence-based teaching practices?

Methods

Faculty Interview Data

To understand which aspects of the CAUSE program may have helped faculty begin the process of changing how they teach, we selected a subset of faculty (n = 6) from the 45 Fellows in the program to interview. Only one faculty member per department was interviewed, but not all departments were represented. Human subjects research was conducted with the approval of the Institutional Review Board at the University of Washington (STUDY00002830).

Interviewees were selected based on four criteria: (1) they had completed at least one quarter of their implementation year, (2) they had PORTAAL data for at least two instances of teaching the same course, (3) they consistently attended CAUSE meetings and showed engagement in the program, and (4) they showed increasing trends in multiple PORTAAL practices over time. Pseudonyms, years of teaching experience, and approximate class size for each faculty member can be found in Table 1. All Fellows interviewed were in the non-tenure teaching track, as these faculty made up the majority of faculty in CAUSE. Four of the Fellows we interviewed identified as women, and two identified as men. We have chosen to use gender-neutral names and pronouns throughout to preserve anonymity. The pseudonyms do not reflect the race or ethnicity of the Fellow.

Information About Interviewed Faculty

| Pseudonym | Years teaching | Approximate class size (students) |
|---|---|---|
| Amari | 10+ | 500 |
| Cory | 5 | 300 |
| Elliot | 1 | 200 |
| Micah | 10+ | 300 |
| Peyton | 10+ | 200 |
| Sasha | 7 | 400 |

Note. Years teaching is the total number of years in a faculty position at this R1 or another college or university, based on the time at which the interview was conducted.

The interviews were voluntary for faculty. Interview questions were written and revised based on discussions among the research team. Interviews were conducted by the same researcher and took 30 to 55 minutes to complete. Audio recordings were collected with verbal consent of the participants, and recordings were transcribed.

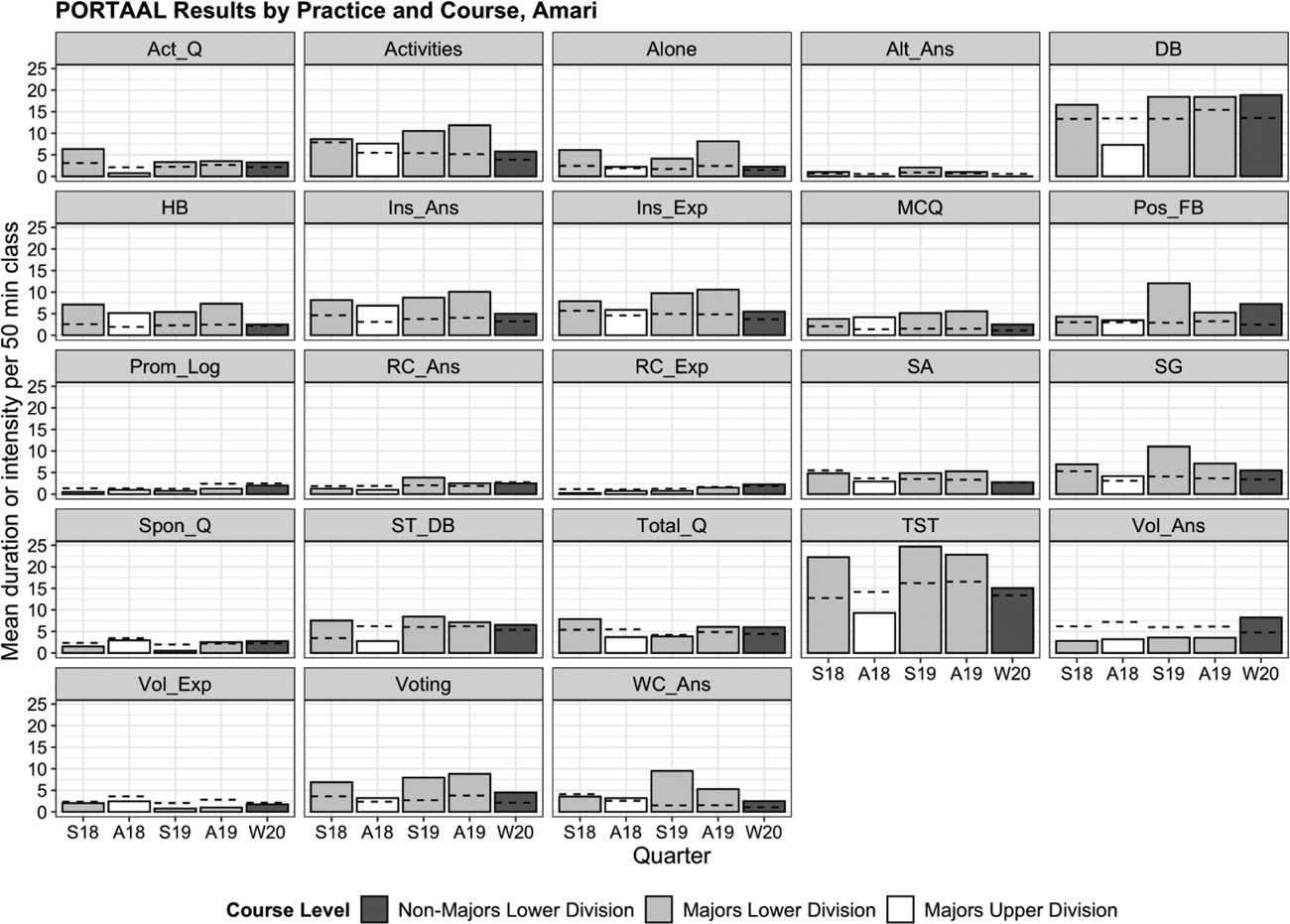

In the interviews, faculty discussed their teaching background, motivation for enrolling in CAUSE, and experience in the program. As prior work has shown that providing faculty with data about the topic of the interview can stimulate more productive reflections (Kwasnicka et al., 2015; Wood et al., 2022), we provided each faculty member with a figure showing their PORTAAL data across the quarters (see Figure 1). The data for these figures had been collected and reported in our previous work (Jackson et al., 2022). Briefly, two researchers viewed video recordings of four different class sessions during each academic term and coded the presence and duration of each PORTAAL practice observed. Data for each PORTAAL practice were averaged, standardized to a 50-minute class session, and summarized in an end-of-term report for each Fellow. For the figures we presented during the interviews, we compiled the quarterly data to show values across the multiple academic terms in which the Fellow taught the courses. The general questions asked of each interviewee are found in Appendix A. Though these questions scaffolded the discussion, faculty were asked various follow-up questions depending on their answers.
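As a rough illustration of the standardization step, the sketch below scales the minutes coded for one practice in each session to a 50-minute session and then averages across the coded sessions of a term. It is not the script used in the study. The function names, the scale-then-average order, and the example values are all assumptions made for illustration.

```python
from statistics import mean


def standardize_to_50(minutes_observed, session_length, target=50.0):
    """Scale minutes observed in one session to a session of `target` minutes."""
    if session_length <= 0:
        raise ValueError("session_length must be positive")
    return minutes_observed * target / session_length


def term_average(sessions, target=50.0):
    """Average the standardized minutes over the coded sessions of one term.

    sessions: list of (minutes_observed, session_length_in_minutes) pairs.
    Assumption: each session is scaled first, then the scaled values are averaged.
    """
    return mean(standardize_to_50(m, length, target) for m, length in sessions)


# Hypothetical values for one practice across four sessions (not study data).
example_sessions = [(10.0, 50.0), (16.0, 80.0), (12.0, 50.0), (8.0, 80.0)]
example_average = term_average(example_sessions)
print(example_average)
```

Scaling each session before averaging keeps longer class sessions from dominating the term value; averaging raw minutes first would weight them more heavily.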

Note. Average values for 23 PORTAAL practices collected across four different class sessions for each of three different courses taught during CAUSE. Long names for each PORTAAL practice code may be found in Appendix B. More elaborate descriptions of each code can be found in Jackson et al. (2022). Dotted lines represent average values for each practice for each quarter based on all CAUSE Fellows. X-axis labels are quarters during CAUSE: “S” referring to spring, “A” to autumn, and “W” to winter.

Thematic Analysis

After transcription, we conducted a thematic analysis of the interviews using the protocol described by Braun and Clarke (2006). We used a primarily deductive approach to thematic analysis, as our interview questions and subsequent themes were intended to answer the research questions we formulated prior to analysis. We did not generate possible codes prior to conducting the interviews or reading the transcripts, but our coding was influenced by the research questions. Two researchers began by reading each transcript and individually creating a list of codes in Dedoose (version 9.0.46). After initial coding, the researchers met to reconcile their codes, revise the use and wording of codes, and discuss how the codes could be organized into themes that would answer the research questions. Each theme was intended to capture a distinct idea about at least one of the research questions. Each code was then organized under one theme. One researcher then revised the coding based on the new list of codes and themes. Together, the researchers revised the themes and codes a final time after the second round of coding, and code presence and application tables were exported from Dedoose.
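A code presence table of the kind described above can be pictured as a count of how many distinct interviewees had each code applied at least once. The sketch below is illustrative only: it does not reproduce the Dedoose export, and the input format, function name, and labels are hypothetical.

```python
from collections import defaultdict


def code_presence(applications):
    """Count the distinct interviewees to whom each code was applied.

    applications: iterable of (interviewee, code) pairs, one per coded excerpt.
    Repeated applications of a code to the same interviewee count once.
    """
    interviewees_by_code = defaultdict(set)
    for interviewee, code in applications:
        interviewees_by_code[code].add(interviewee)
    return {code: len(people) for code, people in interviewees_by_code.items()}


# Hypothetical coded excerpts (labels are placeholders, not study data).
example_applications = [
    ("F1", "code_a"),
    ("F1", "code_a"),
    ("F2", "code_a"),
    ("F2", "code_b"),
]
example_counts = code_presence(example_applications)
print(example_counts)
```

Counting presence rather than the number of excerpts means a code that one interviewee raised many times is not mistaken for a code shared across interviewees.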

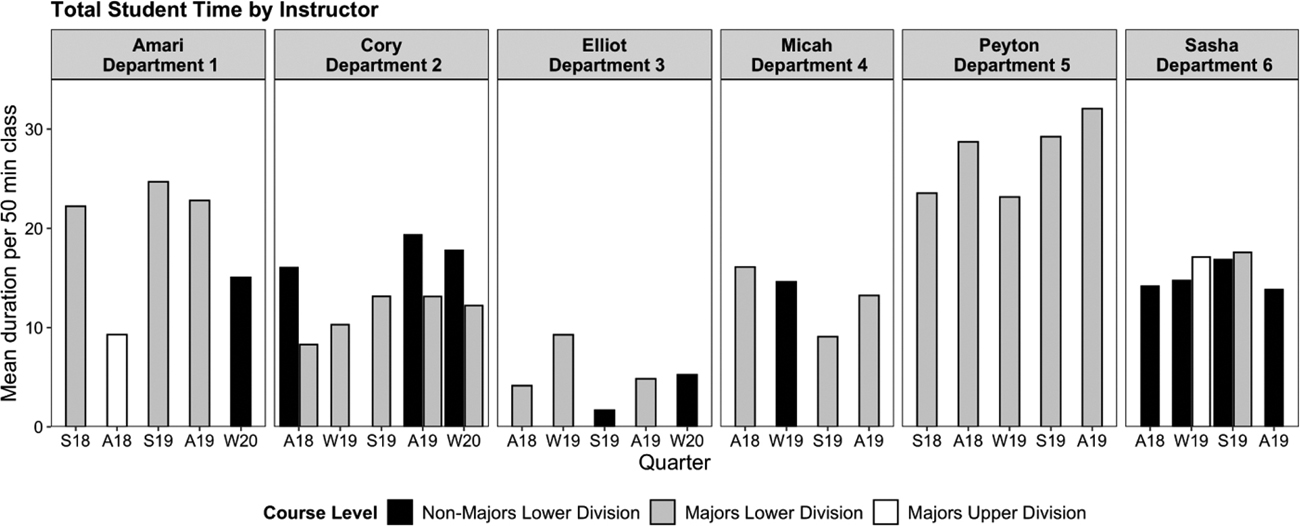

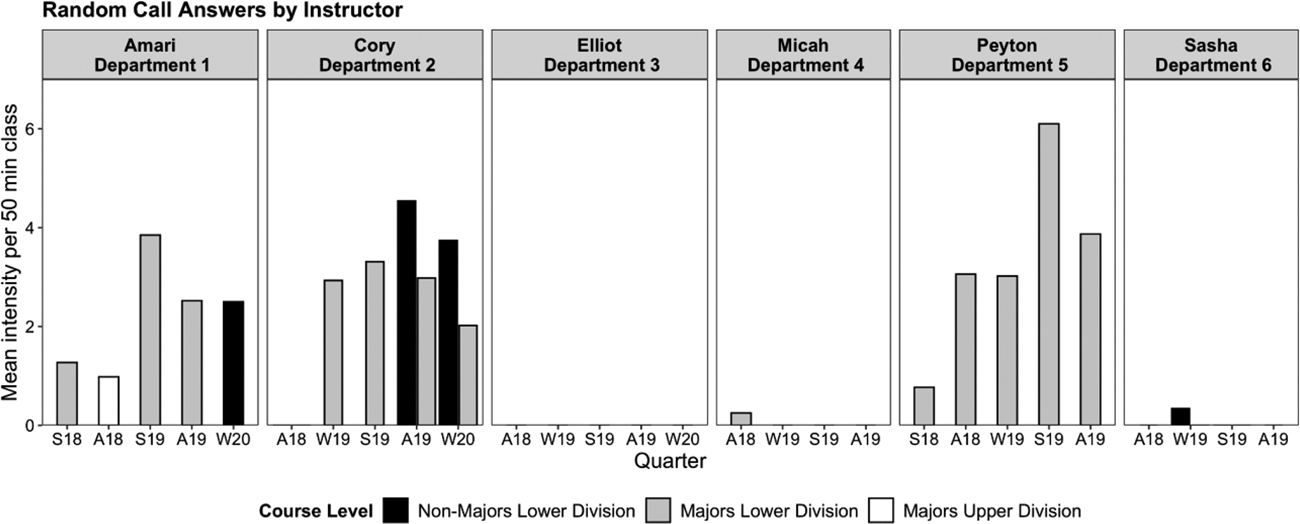

When reporting results of this thematic analysis, we will at times present figures that indicate the variation in implementation of a specific PORTAAL practice among the six Fellows interviewed for this study. The data used to generate these figures were collected and analyzed in the aggregate for the 45 CAUSE Fellows in the program. We have extracted the data for all the courses taught by the six Fellows interviewed for the current study and generated bar graphs.

Results

We identified multiple codes to characterize interview data and organized the codes into five themes (Table 2). For all but one theme (respect for students underlies implementation), all six interviewees discussed at least one of the codes under each theme. To answer our research questions, we will describe overall themes from the interviews and present excerpts to support the themes. Excerpts from faculty may be lightly edited for clarity and to preserve anonymity.

Themes and Codes From Faculty Interviews

| Theme | Code | Number of Fellows who discussed code |
|---|---|---|
| CAUSE social value | Enrolled because of colleague | 3 |
| | Learned about teaching in other departments | 6 |
| | Support to know that others were changing teaching | 2 |
| | Valued meeting other faculty across departments | 4 |
| CAUSE educational value | Feedback was valuable | 3 |
| | Learned about specific practice from colleague | 2 |
| | Provided literature or evidence for teaching practices | 5 |
| | Provided structured time for improving teaching | 1 |
| Context influences implementation | Adapted a strategy for their course | 4 |
| | Classroom layout | 2 |
| | Classroom technology allowed for more active practices | 5 |
| | Curriculum is fixed or inherited | 2 |
| | Random call | 2 |
| | Students are used to active learning | 1 |
| | Variation in implementation among courses | 5 |
| Implementation is related to experience or motivation | Active learning is fun for instructor | 3 |
| | Active learning led to insights in student thinking | 3 |
| | Being unsatisfied with teaching led to change | 2 |
| | Challenge implementing a teaching practice | 6 |
| | Changing teaching incrementally | 4 |
| | Instructor is reflective about teaching | 5 |
| | Practices used before CAUSE | 5 |
| | Previous participation in faculty development | 6 |
| | Random call can be challenging for the instructor | 5 |
| | Solution to implementation challenge | 2 |
| | Time to revise teaching materials is a barrier | 2 |
| Respect for students underlies implementation | Balancing using new strategies with the benefit it would provide | 2 |
| | Building student community | 1 |
| | Instructor implies respect for students | 5 |
| | Student hesitancy to active learning | 3 |
| | Students hearing the answer from their peers may improve confidence | 4 |


RQ1 Which aspects of CAUSE made it a useful program for participants?

Two themes identified from the interviews addressed aspects of CAUSE that made it useful for the Fellows: the social value and the educational value (see Table 2).

CAUSE Social Value

Faculty explained that CAUSE fostered cross-disciplinary conversations about teaching. Multiple interviewees noted they likely would not have met and discussed teaching with faculty from other STEM departments if they had not participated in the CAUSE program. Some Fellows also explained they were encouraged to enroll in CAUSE because of a colleague.

Elliot said it was helpful to be in a cohort of instructors that met regularly, because it allowed the Fellows to build a community across departments and learn about the varied approaches to using active learning. This idea was echoed by other instructors. For example, Cory said,

I’ve really appreciated having the time to sit down and say, the next hour is devoted to thinking about how we can use clicker questions in class, or thinking about how we approach random call in class, or thinking about how we might achieve this, that, or the other in a classroom when you have 300 students versus when you have 50 students. What challenges are there? Or not? Those discussions make you a better teacher. In the same way [as] going to a conference—maybe you’ll learn something useful and maybe you won’t, but it kinda gets you re-fired up to be a good teacher. It’s motivating.

CAUSE Educational Value

When we interviewed the Fellows, they each were implementing evidence-based teaching practices at varied levels. However, the majority explained that CAUSE provided resources to support them as they adopted new teaching practices. For example, five out of the six Fellows interviewed expressed that CAUSE provided them with evidence for the impact of these teaching strategies on student learning and with guidance on how to implement them. Fellows indicated this reduced the amount of time needed to search the literature and educate themselves. Elliot, who was newer to teaching, said,

I think the things that have helped me the most are the things about . . . the practice of how do you run active learning exercises in a classroom effectively . . . . You need to prompt them for logic. Or you need to like, give them time to think about it by themselves first. I think those mechanics, like to understand why those mechanics are helpful. Like reading the research papers about that has been super helpful.

Peyton and Sasha were using some evidence-based teaching practices prior to CAUSE but noted that the program reminded them why it was important to implement evidence-based practices and how to coach other instructors on using these methods effectively.

Additionally, some interviewees explained that the most helpful part of being in CAUSE was receiving objective and timely feedback on their teaching. For example, Sasha said,

What ends up being most valuable to me I think is like the reports of my own data, particularly as I see where I stand with faculty colleagues. And also brainstorming around ways to maybe improve some of the [practices] we talk about.

This quarterly teaching feedback, similar to what is shown in Figure 1, was a hallmark of the CAUSE program.

RQ2 Which factors influenced Fellows’ implementation of evidence-based teaching practices?

We found three themes related to factors that influenced Fellows’ implementation of evidence-based teaching practices (see Table 2). One was how the extrinsic factor of class context influenced implementation, while two related to how the intrinsic factors of Fellows’ motivation, prior experience with these teaching practices, and their respect for students as learners impacted implementation.

Context Influences Implementation

Fellows explained that their implementation of evidence-based teaching practices depended on the context of their classrooms. For example, some of the most common codes applied in this theme included the variation in the practices they used depending on the course they were teaching, how they adapted practices to better meet the unique needs of their course or students, and the benefits of classroom technology as they shifted to more active learning practices. Two instructors, Elliot and Micah, talked specifically about the balance they were attempting to achieve between using more active learning practices during class while meeting the strict schedule of a fixed curriculum. These instructors felt there was little time to spare for implementing more clicker questions or small-group discussions because there was so much material to cover.

A noteworthy example of the variation in implementation of evidence-based teaching practices that coincided with course context can be seen in two courses taught by the same instructor. Amari taught three different courses while in CAUSE, and the amount of total student time, as measured by the total minutes students were thinking alone, working in groups, or answering questions, varied greatly across these three courses (Figure 2). When prompted in the interview about these observed differences, Amari said,

There’s a reason for that. I inherited a lot of the [teaching materials] that I use in [the majors lower division course] from . . . other folks who teach the course. The ones in [majors upper division course] I made up all myself . . . . It was always from the perspective that the students learn more by doing than by hearing me yack, and yet . . . I don’t carry out my own philosophy as effectively in that class. I do more standing up there yakking than I do in [the majors lower division course] where I started with materials from the people who developed the whole active learning approach to that class.

Note. X-axis labels are quarters during CAUSE: “S” referring to spring, “A” to autumn, and “W” to winter. Data displayed in this figure were collected as part of the quantitative study reported in our previous publication and were reported in the aggregate for all CAUSE Fellows (Jackson et al., 2022). Data for the six interviewed Fellows were extracted from that data set to generate bar graphs for each specific Fellow.

Another factor influencing Fellows’ implementation of evidence-based teaching practices was trying to meet the unique aspects of their course by adapting practices. Elliot explained they were trying to adapt the use of volunteer answers to hear from more women in their class. By doing this, they were able to have more students and more women answering questions, which likely increased the total amount of student engagement in their classes. Elliot said,

I’ve also been trying to [call on] someone who hasn’t shared in class before . . . . I think there’s a middle ground, before doing the full random list of students . . . I actually try to bias towards women, if they’re raising their hand. Because most of the class is men. And I don’t want it to be the case that only men are answering these questions. So when I ask for volunteers in the back, I try to pay particular attention if there’s a woman in the back that raises her hand, so that she can say something and then it sounds like there are more women in the class.

Though they did not feel comfortable using random calling, Elliot was finding ways to hear from more students in their classroom, especially those underrepresented in their department.

Micah was newer to using evidence-based teaching practices and wanted to adapt them for their classes but had not yet balanced the level of student engagement with the constraints of the course curriculum. Micah said,

Oftentimes, I’ll have a period when I have figured out which questions to ask, and they’re much more interactive. Then either because I fall behind and we need to catch up, I mean, we’re on a common final exam so I can’t afford to skip topics. If I feel like the students are struggling with something or we’re running out of time, [the questions] will usually be interactive and then never mind . . . we’re going to do it the old-fashioned way.

Implementation Is Related to Experience or Motivation

All of the Fellows interviewed had previously participated in some professional development related to teaching and were using evidence-based practices in varying amounts. However, Fellows also noted they encountered hesitancy or logistical issues while implementing some practices. The most prominent practice that fell into this category was using random calling. Five out of the six interviewees alluded to their discomfort or logistical issues associated with using random calling.

Sasha also elaborated on how their teaching experience and philosophy influenced the use of evidence-based teaching practices in their classes. Sasha said,

I think I was doing a bunch of [evidence-based teaching prior to CAUSE]. My approach to teaching in general is very kind of centered on this idea of students as active participants in the class, and our class is kind of this collaborative learning community. So very naturally without realizing, it was evidence-based when I first started teaching. I kind of slipped into thinking of classes like a dialogue between the students and me, and between the students with each other. I think that people tend to care more about learning if they feel like they have some degree of ability to self-direct. So I think in all my classes, I tried to make it, you know, even if students aren’t directing the curriculum, which they are [doing] sometimes. But they definitely get to direct where the conversation goes that day, and I’m just kind of like the facilitator for that.

Cory taught the same course each quarter during CAUSE and therefore had many opportunities to change their course. They were reflective and motivated about how their teaching could change and improve over time. They expressed that it may take multiple iterations of teaching the same course to devise an effective plan for using active learning. Cory explained,

You’re always like, “Next time I’m gonna [teach] it this other way.” And then you do it a little bit differently and you’re like, “I liked part of that better. But part of that was worse.” I get to the end of class and I’m like, “I wanna do this different next time, or next time, I’m gonna add this, or I need to make sure that I drive this point home on this slide so that they’re already there when we get two slides later.” That’s sort of that future plan, too, of someday I’m gonna have a real plan figured out on how to do that really well. But I’m not there yet.

Some Fellows explained that evidence-based teaching was also a more enjoyable way of teaching as it provided more opportunities to interact with their students. This can result in both students and instructors being more motivated to engage in the learning process. For example, Sasha said,

Yeah, the vast majority of [my students] are really into [active learning]. There’s some who just want, like, the very structured lecture only. And I tell them at the very beginning of the class, “If that’s what you want, pick a different class.” [Active learning is] more fun for me. It’s more fun for the majority of students.

Respect for Students Underlies Implementation

Based on their answers to interview questions, five of the six Fellows exemplified respect for their students. An excerpt from Micah shows how instructors felt that students should be acknowledged as active participants and contributors to the class. Micah said,

Most of the time when I ask for volunteer answers, it’s so that the students get that extra bit of time to think about what just happened and to hear it more in their own words rather than mine. I think that that’s a useful exercise for them. I think there’s maybe an aspect sometimes in [this subject] where some students think that it’s something that’s being passed onto them from heaven. I try to emphasize that [this subject] is something that we all can do . . . . So, when they speak up and tell me the answer, I think it makes it more obvious that it’s something that, I’m hoping at least, they can do and not something that their teachers tell them [how to do].

Another way faculty expressed respect for their students was their concern that evidence-based teaching may induce anxiety in some students or lead to cognitive overload. A few of the Fellows talked specifically about weighing the addition of more activities or assignments with the benefits they could provide for the students. For example, Cory said,

I think that there’s something to be said for cognitive load on the students or just overall load on the students. Some of them are working so many jobs and they have siblings to take care of and they’ve got commutes that are awful . . . and so, adding one more [homework assignment] . . . I wanna make sure that it’s really going to be a benefit to them as opposed to just my harebrained idea.

Case Study: Random Call

In the preceding sections we have used excerpts from multiple Fellows as examples for each theme. We will now show how all five themes were found in one Fellow’s remarks about the most commonly discussed and controversial evidence-based teaching practice: randomly calling on students to give answers during class. The data in the figure below (Figure 3) illustrate the implementation differences for this practice across the Fellows.

Interviewees noted that CAUSE provided ample evidence to support the claim that using random call could create a more equitable classroom. As many of the Fellows in the program had been using this practice for a considerable period of time, they not only encouraged others to adopt this practice but also provided anecdotal information about how best to implement it (CAUSE educational value). These Fellows acted as mentors to instructors who were newer to using this and other practices (CAUSE social value). Three interviewees specifically noted they do not use random call in their classrooms. Two of these instructors explained that it was because they felt it might create anxiety for students (respect for students). In an effort to find a way to use random call that minimizes students’ and instructors’ anxiety, CAUSE Fellows suggested using a variation of the practice: group random call. Group random call relies on students discussing in groups prior to the report-out phase of the activity and allows students to be called by group name rather than by an individual student’s name (Knight et al., 2016). More recently, a new method of random call, termed “warm calling”—in which students are given an opportunity to discuss the answer with students they are sitting near prior to being called on by the instructor—has been introduced as yet another option for instructors (Metzger & Via, 2022).

We have detailed an account below from a Fellow who first struggled to use random call, adapted it to serve their students better, and became an avid supporter of the practice within CAUSE and within their department.

At the start of their first quarter in CAUSE, Peyton was implementing evidence-based teaching practices, but they seldom used random call. When asked about this, Peyton said,

I can tell you when I first did random calling, it was a bit of a disaster . . . . The general fear [of the subject], I think, made it difficult to do random calling because they were nervous, to begin with . . . . So, when I first tried, I random called by their names, but I had plenty of students. I couldn’t remember who’s who. When I random called by name, they would just pretend that they [were] not there. I had a lot of ignored calls and it wasn’t working. So, I had to stop that quarter . . . . But then I tried to do different things [with] random calls. This time I had a list of seat numbers and I would just walk up to that seat number and then personally ask, “So, what did you discuss?” Then, in that case, they can’t pretend that they are not there. Since then, I’ve been using that method and it works beautifully. It’s great.

By adapting the random call method to meet the comfort level of their students, Peyton was able to preserve the random selection of student respondents while potentially reducing the anxiety of being called by name. Peyton also explained,

The random call has been eye-opening for me really. So, when I used to ask for volunteers more often, often people who volunteer will have the right answer and the right logic. They’re confident enough to do that. But when I random call, often they say, “Well, I wasn’t sure,” and I say, “That’s okay. We’re here to discuss.” Then they’ll give me sometimes very interesting logic which is still correct and I never thought about it [that way]. I was like, “Wow, that’s the first time I’ve heard this logic and it’s great.” I love that aspect . . . . And I think the students enjoy that because not all students get the right logic.

Using random call also allowed Peyton to better understand the types of reasoning their students were using and why they were making mistakes. Peyton had such a positive experience using random call that they have been encouraging other instructors in their department to use it. Peyton said,

Maybe [CAUSE] was not so much of a learning experience for me, but moral support for me. Because I think I am one of only two people in [my] department who are consistently doing random calling, maybe three. Some instructors are trying to implement it. Try it, and then it didn’t work, and then they stopped doing it. With the backing of CAUSE, I’m giving them stronger advice. [I’m telling them,] “It’s not just from me . . . [Random calling] is a really good thing.” And then I can talk about other people in the other departments doing it.

Discussion

Numerous articles have documented the many barriers faculty face when attempting to transform their teaching from traditional lecture to teaching methods that create greater student engagement (Apkarian et al., 2021; Austin, 2011; Brownell & Tanner, 2012; Carbone et al., 2019; Denaro et al., 2022; Henderson & Dancy, 2007; Kim et al., 2019; Shadle et al., 2017). Our thematic analysis of interviews further supports the existence of these barriers for today’s instructors. The six CAUSE Fellows indicated that the context and constraints of the course they taught, as well as their prior experience with certain teaching practices, were influential in their decisions to adopt and implement new teaching practices. Though multiple barriers to change existed for the Fellows, previous research indicates instructors’ perceived support in the change process is more impactful than the perceived barriers (Bathgate et al., 2019; McConnell et al., 2020). In fact, our thematic analysis reinforces this conclusion, as the Fellows identified the social support and educational resources the program provided as key to the program being useful for them. We also found that the Fellows expressed a personal motivation to change and a high degree of respect for their students as capable and valued partners in the learning process. We therefore frame our discussion as an asset rather than a deficit model of faculty change. We will organize our discussion to align with our research questions.

Useful Aspects of CAUSE

Our thematic analysis revealed that these six CAUSE Fellows greatly valued the strong and broad social support offered by the program. As a systems approach for change (Austin, 2011; DeMarais et al., 2022) was a key component of our program, CAUSE annually recruited cohorts of two to three faculty from seven different STEM departments over the three years of the program. This larger community afforded Fellows the opportunity to meet faculty outside their department and hear how other instructors had successfully implemented evidence-based teaching practices in their STEM classrooms. Multiple instructors explained they appreciated not having to “reinvent the wheel” of how to use these new teaching practices and could instead learn from more experienced CAUSE Fellows. Vygotsky’s (1978) sociocultural theory of learning states learning stems from social interaction, as knowledge is constructed by interactions with others, which in turn leads to internalization of that knowledge. The sociocultural theory of learning applies to any learner whether they are students in a class or faculty in professional development programs.

The Fellows appreciated the educational support the program offered in providing a curated and concise presentation of the primary literature on the impact of evidence-based teaching on student academic performance. As all CAUSE Fellows held doctorate degrees in STEM fields, they had been trained to make decisions based on empirical evidence and knew the value of results in peer-reviewed literature. Interestingly, Bouwma-Gearhart (2012) found that many faculty took part in faculty development efforts because they wanted to bring their teaching identities in better alignment with their research background. Therefore, it is possible that providing a rich list of citations for the value of evidence-based teaching may have supported these Fellows’ implementation of teaching practices.

However, our finding is in contrast to Andrews and Lemons’s (2015) results from their qualitative analysis of interviews of faculty engaged in adoption of a case study approach to teaching. Andrews and Lemons found that faculty’s personal experience with active learning was more influential than the provided empirical evidence for motivating change, which has been echoed in the literature (Jacobson & Cole, 2020). We interviewed a small sample of faculty specifically about the support that the CAUSE program provided. One of the hallmarks of the program was providing primary literature about evidence-based teaching. Therefore, it is possible that when Fellows were interviewed, they were likely to report that the evidence from the literature was helpful. It is also possible that these faculty were motivated by both empirical evidence and their own experiences with evidence-based teaching.

One of the Fellows, Peyton, explained that they were able to use evidence from the CAUSE meetings to support their argument for other instructors in their department to use random call. Peyton’s story supports the premise and findings from others, which suggest participants in faculty development programs can themselves become change agents within their departments and can even provide the impetus, support, and guidance for other departments on campus that are slower to implement evidence-based teaching practices (Andrews et al., 2016; Kezar, 2014; Lane et al., 2019; McConnell et al., 2020).

Providing objective feedback on classroom practices rather than instructor self-report has been noted to be key to many professional development programs in STEM departments (Brickman et al., 2016; Gormally et al., 2014; Hjelmstad et al., 2018; Manduca et al., 2017). CAUSE provided quantitative feedback on PORTAAL practices Fellows were using in their classrooms at the end of each term. The quarterly PORTAAL reports allowed faculty to monitor how their values for each PORTAAL practice compared with the range of values of their colleagues and provided opportunities for self-reflection and motivation for change. Two of the instructors interviewed stated this was one of the most beneficial parts of CAUSE.

Factors That Influenced Implementation of Teaching Practices

In agreement with results from the professional development efforts of others, we found that extrinsic context influences the level of implementation of new teaching methods (Apkarian et al., 2021; Shadle et al., 2017; Sturtevant & Wheeler, 2019). Fellows noted that classroom technologies such as Poll Everywhere facilitated greater student engagement with course material. However, they also identified many contextual barriers that led to greater variation in the level and types of evidence-based teaching they implemented across the different courses they taught (Yik et al., 2022). These contexts ranged from the physical layout of the room (Metzger & Langley, 2020), to course subject matter, to the students’ previous experience and comfort with various evidence-based teaching methods.

Intrinsic factors related to prior experience with these teaching methods or their motivation also influenced Fellows’ decisions to change their teaching. All the Fellows we interviewed had previously participated in some type of teaching professional development. This finding is in agreement with results of Yik et al. (2022), who surveyed over 2,000 instructors from 749 institutions and found that instructors who had participated in teaching-related faculty programs spent considerably less time lecturing and more time using active learning methods. The majority of the Fellows also mentioned that they were reflecting on their teaching practices and changing the way they used evidence-based practices incrementally over time. We were encouraged to find Fellows were reflecting on their teaching, as previous research has found reflection can be linked to changed beliefs and practices in the classroom (Wlodarsky, 2005). In agreement with other findings (Bathgate et al., 2019), some Fellows also discussed how they found active learning to be a more enjoyable way to teach. These findings suggest personal factors may have had a major impact on Fellows’ decisions to adopt and implement new teaching practices during CAUSE.

Another theme evident from the interviews was interviewees’ obvious respect for students as valued partners in the learning process. We interpret these views as the Fellows holding a growth mindset toward their students’ ability to learn. A growth mindset is the belief that all students can learn if given the proper support and practice (Dweck, 1999). Work by Canning et al. (2019) found that faculty who held a growth mindset about their students’ academic abilities had academic achievement gaps half the size of those of faculty who held a fixed mindset. Many suggest achievement gaps indicate some students are not as capable as others, but Canning et al.’s work indicates that how students are viewed as learners may be equally influential. It was encouraging to see that Fellows were attentive to how the new teaching methods impacted their students’ learning behaviors in and out of the classroom and adjusted their teaching methods accordingly. This was particularly obvious in how Fellows implemented random call. Although this method may be stressful for some students (K. M. Cooper et al., 2018), hearing all voices is an equity issue, so Fellows found alternative ways to use random call (Metzger & Via, 2022; Waugh & Andrews, 2020). We came to realize that these CAUSE Fellows had switched from seeing themselves as the “sage on the stage” to becoming the “guide on the side” to facilitate rather than dictate student learning.

Limitations

Findings from faculty interviews are subject to a selection bias. The individuals who participated in interviews were selected by researchers based on their engagement in CAUSE and their comfort with discussing their teaching. Our sample of interviewees could have had a more positive experience in the CAUSE program and this could have influenced their responses to our interview questions. Therefore, the themes reflected in these interviews may not be representative of all the faculty in CAUSE. We also found that the interviewees all reported using some level of evidence-based teaching prior to CAUSE and had some previous experience with faculty development.

Implications for STEM Professional Development Programs

In response to the numerous national calls to transform STEM teaching from traditional lecture to evidence-based practices, many groups have created and assessed faculty development programs to support this change. We designed CAUSE to operationalize current best practices resulting from these efforts. Below we summarize our results so that future professional development programs may take into consideration the value of providing the positive aspects of CAUSE and acknowledge the barriers that continue to exist for faculty as they begin the process of change.

Our thematic analysis of the interviews of six CAUSE Fellows contributes to the growing body of research designed to identify the factors that support faculty’s decisions to transform their teaching. Our analysis indicated that both extrinsic and intrinsic factors influenced their implementation of evidence-based teaching practices. The Fellows noted the social interaction with Fellows from their department and across STEM departments built a large and strong support network that provided encouragement for their sustained efforts and acknowledgment of their successes. Fellows valued that CAUSE promoted teaching methods that had empirical support for enhancing students’ academic performance and that CAUSE provided quantitative and objective feedback on their teaching methods over time. Many factors influenced Fellows’ implementation of evidence-based practices, including the course level and content, students’ comfort with the disciplinary material, Fellows’ experience using teaching methods, and Fellows’ personal motivation to change.

Throughout the interviews, Fellows expressed a consistent and strong sense of respect for their students. These Fellows were aware some students may be unfamiliar and therefore uncomfortable with evidence-based teaching, so implementation would have to be tempered. Fellows were also aware they needed to balance the demands the new teaching strategies would place on the students’ time with the benefits they would provide, but they respected their students as willing and able learners. Given the increased awareness of the impact faculty’s mindset has on student learning (Canning et al., 2019) and recent evidence that inclusive teaching practices that emphasize treating students with dignity and respect may also close achievement gaps in STEM for students from marginalized backgrounds (Theobald et al., 2020), we were encouraged to find “respect for students” as a persistent theme in the interviews.

We found that change is a process that is complex and as varied as the individuals engaging in change. To be successful, faculty development programs need to provide the necessary social and educational support for change and recognize each individual brings their own teaching experience, course context, and personal motivations to the process of change.

Biographies

Mallory A. Jackson is a graduate of the Department of Psychology and the Department of Biology at the University of Washington, Seattle. She is a former Research Scientist in the Biology Education Research Group at the University of Washington, Seattle.

Mary Pat Wenderoth is a Teaching Professor Emerita in the Department of Biology at the University of Washington, Seattle. She is a member of the Biology Education Research Group. She conducts research in the areas of student reasoning in physiology and creating effective faculty development efforts to implement evidence-based teaching practices in STEM classrooms. She is also a co-founder of the Society for the Advancement of Biology Education Research (SABER).

Acknowledgments

We would like to thank Jennifer H. Doherty for her guidance on our interview protocol and analysis. We also thank the CAUSE faculty participants who graciously shared their time to be interviewed.

Conflict of Interest Statement

The authors have no conflict of interest.

References

Alberts, B. (2022). Why science education is more important than most scientists think. FEBS Letters, 596(2), 149–159. https://doi.org/10.1002/1873-3468.14272

American Association for the Advancement of Science. (2011). Vision and change in undergraduate biology education: A call to action. AAAS.

Anderson, W. A., Banerjee, U., Drennan, C. L., Elgin, S. C. R., Epstein, I. R., Handelsman, J., Hatfull, G. F., Losick, R., O’Dowd, D. K., Olivera, B. M., Strobel, S. A., Walker, G. C., & Warner, I. M. (2011). Changing the culture of science education at research universities. Science, 331(6014), 152–153. https://doi.org/10.1126/science.1198280

Andrews, T. C., Conaway, E. P., Zhao, J., & Dolan, E. L. (2016). Colleagues as change agents: How department networks and opinion leaders influence teaching at a single research university. CBE—Life Sciences Education, 15(2), Article 15. https://doi.org/10.1187/cbe.15-08-0170

Andrews, T. C., & Lemons, P. P. (2015). It’s personal: Biology instructors prioritize personal evidence over empirical evidence in teaching decisions. CBE—Life Sciences Education, 14(1), Article 7. https://doi.org/10.1187/cbe.14-05-0084

Apkarian, N., Henderson, C., Stains, M., Raker, J., Johnson, E., & Dancy, M. (2021). What really impacts the use of active learning in undergraduate STEM education? Results from a national survey of chemistry, mathematics, and physics instructors. PLoS ONE, 16(2), e0247544. https://doi.org/10.1371/journal.pone.0247544

Aragón, O. R., Eddy, S. L., & Graham, M. J. (2018). Faculty beliefs about intelligence are related to the adoption of active-learning practices. CBE—Life Sciences Education, 17(3), Article 47. https://doi.org/10.1187/cbe.17-05-0084

Austin, A. E. (2011, March 1). Promoting evidence-based change in undergraduate science education. National Academies National Research Council Board on Science Education. https://sites.nationalacademies.org/cs/groups/dbassesite/documents/webpage/dbasse_072578.pdf

Bathgate, M. E., Aragón, O. R., Cavanagh, A. J., Waterhouse, J. K., Frederick, J., & Graham, M. J. (2019). Perceived supports and evidence-based teaching in college STEM. International Journal of STEM Education, 6(1), Article 11. https://doi.org/10.1186/s40594-019-0166-3

Borda, E., Schumacher, E., Hanley, D., Geary, E., Warren, S., Ipsen, C., & Stredicke, L. (2020). Initial implementation of active learning strategies in large, lecture STEM courses: Lessons learned from a multi-institutional, interdisciplinary STEM faculty development program. International Journal of STEM Education, 7(1), Article 4. https://doi.org/10.1186/s40594-020-0203-2

Bouwma-Gearhart, J. (2012). Research university STEM faculty members’ motivation to engage in teaching professional development: Building the choir through an appeal to extrinsic motivation and ego. Journal of Science Education and Technology, 21(5), 558–570. https://doi.org/10.1007/s10956-011-9346-8

Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77–101. https://doi.org/10.1191/1478088706qp063oa

Brickman, P., Gormally, C., & Martella, A. M. (2016). Making the grade: Using instructional feedback and evaluation to inspire evidence-based teaching. CBE—Life Sciences Education, 15(4), Article 75. https://doi.org/10.1187/cbe.15-12-0249

Brownell, S. E., & Tanner, K. D. (2012). Barriers to faculty pedagogical change: Lack of training, time, incentives, and . . . tensions with professional identity? CBE—Life Sciences Education, 11(4), 339–346. https://doi.org/10.1187/cbe.12-09-0163

Callens, M. V., Kelter, P., Motschenbacher, J., Nyachwaya, J., Ladbury, J. L., & Semanko, A. M. (2019). Developing and implementing a campus-wide professional development program: Successes and challenges. Journal of College Science Teaching, 49(2), 68–75.

Canning, E. A., Muenks, K., Green, D. J., & Murphy, M. C. (2019). STEM faculty who believe ability is fixed have larger racial achievement gaps and inspire less student motivation in their classes. Science Advances, 5(2), eaau4734. https://doi.org/10.1126/sciadv.aau4734

Carbone, A., Drew, S., Ross, B., Ye, J., Phelan, L., Lindsay, K., & Cottman, C. (2019). A collegial quality development process for identifying and addressing barriers to improving teaching. Higher Education Research & Development, 38(7), 1356–1370. https://doi.org/10.1080/07294360.2019.1645644

Cooper, K. M., Downing, V. R., & Brownell, S. E. (2018). The influence of active learning practices on student anxiety in large-enrollment college science classrooms. International Journal of STEM Education, 5(1), Article 23. https://doi.org/10.1186/s40594-018-0123-6

Cooper, M. M., Caballero, M. D., Ebert-May, D., Fata-Hartley, C. L., Jardeleza, S. E., Krajcik, J. S., Laverty, J. T., Matz, R. L., Posey, L. A., & Underwood, S. M. (2015). Challenge faculty to transform STEM learning. Science, 350(6258), 281–282. https://doi.org/10.1126/science.aab0933

Dancy, M. H., & Henderson, C. (2008). Barriers and promises in STEM reform. National Academies National Research Council Board on Science Education. https://sites.nationalacademies.org/cs/groups/dbassesite/documents/webpage/dbasse_072636.pdf

DeMarais, A., Bangera, G., Bronson, C., Byers, S., Davis, W., Linder, N., McFarland, J., Offerdahl, E., Otto, J., Pape-Lindstrom, P., Pollock, C., Reiness, G., Stavrianeas, S., & Wenderoth, M. P. (2022). What lies beneath? A systems thinking approach to catalyzing department-level curricular and pedagogical reform through the Northwest PULSE workshops. Transformative Dialogues: Teaching and Learning Journal, 14(3), Article 3. https://td.journals.psu.edu/td/article/view/1597

Denaro, K., Kranzfelder, P., Owens, M. T., Sato, B., Zuckerman, A. L., Hardesty, R. A., Signorini, A., Aebersold, A., Verma, M., & Lo, S. M. (2022). Predicting implementation of active learning by tenure-track teaching faculty using robust cluster analysis. International Journal of STEM Education, 9(1), Article 49. https://doi.org/10.1186/s40594-022-00365-9

Derting, T. L., Ebert-May, D., Henkel, T. P., Maher, J. M., Arnold, B., & Passmore, H. A. (2016). Assessing faculty professional development in STEM higher education: Sustainability of outcomes. Science Advances, 2(3), e1501422. https://doi.org/10.1126/sciadv.1501422

Driessen, E. P., Knight, J. K., Smith, M. K., & Ballen, C. J. (2020). Demystifying the meaning of active learning in postsecondary biology education. CBE—Life Sciences Education, 19(4), Article 52. https://doi.org/10.1187/cbe.20-04-0068

Dweck, C. S. (1999). Self-theories: Their role in motivation, personality, and development. Psychology Press. https://doi.org/10.4324/9781315783048

Ebert-May, D., Derting, T. L., Hodder, J., Momsen, J. L., Long, T. M., & Jardeleza, S. E. (2011). What we say is not what we do: Effective evaluation of faculty professional development programs. BioScience, 61(7), 550–558. https://doi.org/10.1525/bio.2011.61.7.9

Eddy, S. L., Converse, M., & Wenderoth, M. P. (2015). PORTAAL: A classroom observation tool assessing evidence-based teaching practices for active learning in large science, technology, engineering, and mathematics classes. CBE—Life Sciences Education, 14(2), Article 23. https://doi.org/10.1187/cbe.14-06-0095

Freeman, S., Eddy, S. L., McDonough, M., Smith, M. K., Okoroafor, N., Jordt, H., & Wenderoth, M. P. (2014). Active learning increases student performance in science, engineering, and mathematics. Proceedings of the National Academy of Sciences, 111(23), 8410–8415. https://doi.org/10.1073/pnas.1319030111

Gormally, C., Evans, M., & Brickman, P. (2014). Feedback about teaching in higher ed: Neglected opportunities to promote change. CBE—Life Sciences Education, 13(2), 187–199. https://doi.org/10.1187/cbe.13-12-0235

Handelsman, J., Ebert-May, D., Beichner, R., Bruns, P., Chang, A., DeHaan, R., Gentile, J., Lauffer, S., Stewart, J., Tilghman, S. M., & Wood, W. B. (2004). Scientific teaching. Science, 304(5670), 521–522. https://doi.org/10.1126/science.1096022

Harvey, C., Eshleman, K., Koo, K., Smith, K. G., Paradise, C. J., & Campbell, A. M. (2016). Encouragement for faculty to implement Vision and Change. CBE—Life Sciences Education, 15(4), es7. https://doi.org/10.1187/cbe.16-03-0127

Henderson, C., Beach, A., & Finkelstein, N. (2011). Facilitating change in undergraduate STEM instructional practices: An analytic review of the literature. Journal of Research in Science Teaching, 48(8), 952–984. https://doi.org/10.1002/tea.20439

Henderson, C., & Dancy, M. H. (2007). Barriers to the use of research-based instructional strategies: The influence of both individual and situational characteristics. Physical Review Special Topics—Physics Education Research, 3(2), 020102. https://doi.org/10.1103/PhysRevSTPER.3.020102

Herman, G. L., Greene, J. C., Hahn, L. D., Mestre, J. P., Tomkin, J. H., & West, M. (2018). Changing the teaching culture in introductory STEM courses at a large research university. Journal of College Science Teaching, 47(6), 32–38.

Hjelmstad, K. L., Hjelmstad, K. D., Krause, S. J., Mayled, L. H., Judson, E., Ross, L., Culbertson, R., Middleton, J. A., Ankeny, C. J., & Chen, Y.-C. (2018, June 24–27). Facilitating change in instructional practice in a faculty development program through classroom observations and formative feedback coaching [Paper presentation]. ASEE Annual Conference and Exposition, Salt Lake City, Utah, United States. https://doi.org/10.18260/1-2--30505

Jackson, M. A., Moon, S., Doherty, J. H., & Wenderoth, M. P. (2022). Which evidence-based teaching practices change over time? Results from a university-wide STEM faculty development program. International Journal of STEM Education, 9(1), Article 22. https://doi.org/10.1186/s40594-022-00340-4

Jacobson, W., & Cole, R. (2020). Motivations and obstacles influencing faculty engagement in adopting teaching innovations. To Improve the Academy: A Journal of Educational Development, 39(1). https://doi.org/10.3998/tia.17063888.0039.106

Kezar, A. (2014). Higher education change and social networks: A review of research. The Journal of Higher Education, 85(1), 91–125. https://doi.org/10.1353/jhe.2014.0003

Kim, A. M., Speed, C. J., & Macaulay, J. O. (2019). Barriers and strategies: Implementing active learning in biomedical science lectures. Biochemistry and Molecular Biology Education, 47(1), 29–40. https://doi.org/10.1002/bmb.21190

Knight, J. K., Wise, S. B., & Sieke, S. (2016). Group random call can positively affect student in-class clicker discussions. CBE—Life Sciences Education, 15(4), Article 56. https://doi.org/10.1187/cbe.16-02-0109

Kwasnicka, D., Dombrowski, S. U., White, M., & Sniehotta, F. F. (2015). Data-prompted interviews: Using individual ecological data to stimulate narratives and explore meanings. Health Psychology, 34(12), 1191–1194. https://doi.org/10.1037/hea0000234

Lane, A. K., Skvoretz, J., Ziker, J. P., Couch, B. A., Earl, B., Lewis, J. E., McAlpin, J. D., Prevost, L. B., Shadle, S. E., & Stains, M. (2019). Investigating how faculty social networks and peer influence relate to knowledge and use of evidence-based teaching practices. International Journal of STEM Education, 6(1), Article 28. https://doi.org/10.1186/s40594-019-0182-3

Lombardi, D., Shipley, T. F., Astronomy Team, Biology Team, Chemistry Team, Engineering Team, Geography Team, Geoscience Team, & Physics Team. (2021). The curious construct of active learning. Psychological Science in the Public Interest, 22(1), 8–43. https://doi.org/10.1177/1529100620973974

Macaluso, R., Amaro-Jiménez, C., Patterson, O. K., Martinez-Cosio, M., Veerabathina, N., Clark, K., & Luken-Sutton, J. (2021). Engaging faculty in student success: The promise of active learning in STEM faculty in professional development. College Teaching, 69(2), 113–119. https://doi.org/10.1080/87567555.2020.1837063

Manduca, C. A., Iverson, E. R., Luxenberg, M., Macdonald, R. H., McConnell, D. A., Mogk, D. W., & Tewksbury, B. J. (2017). Improving undergraduate STEM education: The efficacy of discipline-based professional development. Science Advances, 3(2), e1600193. https://doi.org/10.1126/sciadv.1600193

Marbach-Ad, G., & Rietschel, C. H. (2016). A case study documenting the process by which biology instructors transition from teacher-centered to learner-centered teaching. CBE—Life Sciences Education, 15(4), Article 62. https://doi.org/10.1187/cbe.16-06-0196

McConnell, M., Montplaisir, L., & Offerdahl, E. (2020). Meeting the conditions for diffusion of teaching innovations in a university STEM department. Journal for STEM Education Research, 3(1), 43–68. https://doi.org/10.1007/s41979-019-00023-w

Metzger, K. J., & Langley, D. (2020). The room itself is not enough: Student engagement in active learning classrooms. College Teaching, 68(3), 150–160. https://doi.org/10.1080/87567555.2020.1768357

Metzger, K. J., & Via, Z. (2022). Warming up the cold call: Encouraging classroom inclusion by considering warm- & cold-calling techniques. The American Biology Teacher, 84(6), 342–346. https://doi.org/10.1525/abt.2022.84.6.342

Pfund, C., Miller, S., Brenner, K., Bruns, P., Chang, A., Ebert-May, D., Fagen, A. P., Gentile, J., Gossens, S., Khan, I. M., Labov, J. B., Pribbenow, C. M., Susman, M., Tong, L., Wright, R., Yuan, R. T., Wood, W. B., & Handelsman, J. (2009). Summer institute to improve university science teaching. Science, 324(5926), 470–471. https://doi.org/10.1126/science.1170015

Prince, M. (2004). Does active learning work? A review of the research. Journal of Engineering Education, 93(3), 223–231. https://doi.org/10.1002/j.2168-9830.2004.tb00809.x

Ruiz-Primo, M. A., Briggs, D., Iverson, H., Talbot, R., & Shepard, L. A. (2011). Impact of undergraduate science course innovations on learning. Science, 331(6022), 1269–1270. https://doi.org/10.1126/science.1198976

Rutz, C., Condon, W., Iverson, E. R., Manduca, C. A., & Willett, G. (2012). Faculty professional development and student learning: What is the relationship? Change: The Magazine of Higher Learning, 44(3), 40–47.

Schuster, J. H., & Finkelstein, M. J. (2006). The American faculty: The restructuring of academic work and careers. Johns Hopkins University Press.

Shadle, S. E., Marker, A., & Earl, B. (2017). Faculty drivers and barriers: Laying the groundwork for undergraduate STEM education reform in academic departments. International Journal of STEM Education, 4(1), Article 8. https://doi.org/10.1186/s40594-017-0062-7

Stains, M., Harshman, J., Barker, M. K., Chasteen, S. V., Cole, R., DeChenne-Peters, S. E., Eagan, M. K., Jr., Esson, J. M., Knight, J. K., Laski, F. A., Levis-Fitzgerald, M., Lee, C. J., Lo, S. M., McDonnell, L. M., McKay, T. A., Michelotti, N., Musgrove, A., Palmer, M. S., Plank, K. M., . . . Young, A. M. (2018). Anatomy of STEM teaching in North American universities. Science, 359(6383), 1468–1470. https://doi.org/10.1126/science.aap8892

Sturtevant, H., & Wheeler, L. (2019). The STEM Faculty Instructional Barriers and Identity Survey (FIBIS): Development and exploratory results. International Journal of STEM Education, 6(1), Article 35. https://doi.org/10.1186/s40594-019-0185-0

Theobald, E. J., Hill, M. J., Tran, E., Agrawal, S., Arroyo, E. N., Behling, S., Chambwe, N., Cintrón, D. L., Cooper, J. D., Dunster, G., Grummer, J. A., Hennessey, K., Hsiao, J., Iranon, N., Jones, L., Jordt, H., Keller, M., Lacey, M. E., Littlefield, C. E., . . . Freeman, S. (2020). Active learning narrows achievement gaps for underrepresented students in undergraduate science, technology, engineering, and math. Proceedings of the National Academy of Sciences, 117(12), 6476–6483. https://doi.org/10.1073/pnas.1916903117

Turpen, C., Dancy, M., & Henderson, C. (2016). Perceived affordances and constraints regarding instructors’ use of Peer Instruction: Implications for promoting instructional change. Physical Review Physics Education Research, 12(1), 010116. https://doi.org/10.1103/physrevphyseducres.12.010116

Viskupic, K., Ryker, K., Teasdale, R., Manduca, C., Iverson, E., Farthing, D., Bruckner, M. Z., & McFadden, R. (2019). Classroom observations indicate the positive impacts of discipline-based professional development. Journal for STEM Education Research, 2(2), 201–228. https://doi.org/10.1007/s41979-019-00015-w

Vygotsky, L. S. (1978). Mind in society: The development of higher psychological processes (M. Cole, V. John-Steiner, S. Scribner, & E. Souberman, Eds.). Harvard University Press.

Waugh, A. H., & Andrews, T. C. (2020). Diving into the details: Constructing a framework of random call components. CBE—Life Sciences Education, 19(2), Article 14. https://doi.org/10.1187/cbe.19-07-0130

Wlodarsky, R. (2005). The professoriate: Transforming teaching practices through critical reflection and dialogue. Teaching and Learning: The Journal of Natural Inquiry & Reflective Practice, 19(3), 156–172.

Wood, A. K., Christie, H., MacKay, J. R. D., & Kinnear, G. (2022). Using data about classroom practices to stimulate significant conversations and aid reflection. International Journal for Academic Development. Advance online publication. https://doi.org/10.1080/1360144X.2022.2103817

Yik, B. J., Raker, J. R., Apkarian, N., Stains, M., Henderson, C., Dancy, M. H., & Johnson, E. (2022). Evaluating the impact of malleable factors on percent time lecturing in gateway chemistry, mathematics, and physics courses. International Journal of STEM Education, 9(1), Article 15. https://doi.org/10.1186/s40594-022-00333-3

Appendix A. Interview Questions

Background information:

How many years have you been teaching at this institution or elsewhere?

Have you done any professional development for teaching prior to CAUSE?

General impressions:

What was your primary motivation for enrolling in CAUSE?

What was the most valuable thing you learned from CAUSE meetings?

How would you describe the community aspect of the meetings?

Did you talk to faculty in your department about CAUSE outside of meetings (either members of CAUSE or not)?

What kind of conversations did this elicit?

Teaching reflection:

Were you using any evidence-based teaching practices prior to enrolling in CAUSE?

What kind of strategies were you using?

Were there other teaching strategies that you had heard of or were familiar with?

When you thought about implementing evidence-based teaching, what were your barriers and benefits?

Show bar and line graphs

Talk about meaningful patterns over time

Why did you change your teaching in this way?

How did the students react to evidence-based teaching?

Did you have any pushback?

Feedback:

What are the most important things for your students to get out of your course? Is this varied among your courses?

What was the thing that most supported your implementation of active learning?

What additional things could CAUSE have offered to support your implementation of active learning?

Do you have specific suggestions for the CAUSE program?

What support would you have liked to receive in addition to the meetings, feedback on teaching practices, and student exam performance?

Appendix B. PORTAAL Practices

| PORTAAL code name | PORTAAL practice | Instance (I) / duration (D) |
|---|---|---|
| Act_Q | Student asks a question during an activity | I |
| Activities | Individual or group activities in class | I |
| Alone | Students work alone | I |
| Alt_Ans | Providing alternative answers to a question | I |
| DB | Debrief of a question | D |
| HB | High Bloom’s in-class activities | I |
| Ins_Ans | Instructor provides answer to a question | I |
| Ins_Exp | Instructor explains the answer to a question | I |
| MCQ | Students work on a multiple choice question | I |
| Pos_FB | Instructor use of positive feedback to students | I |
| Prom_Log | Instructors prompting logic from students | I |
| RC_Ans | Instructors randomly call on students for answers | I |
| RC_Exp | Randomly called students explain answers | I |
| SA | Students work on short answer question | I |
| SG | Students work in small groups | I |
| Spon_Q | Student questions outside of activity (spontaneous) | I |
| ST_DB | Student time in debrief of a question | D |
| Total_Q | Total student questions in class | I |
| TST | Total student time during class | D |
| Vol_Ans | Student volunteer answers a question | I |
| Vol_Exp | Student volunteer explains answer to a question | I |
| Voting | Students use a voting system to share answers | I |
| WC_Ans | Whole class answers a question | I |

Unit of instance: number of occurrences (n). Unit of duration: minutes.